Last week, a major LLM provider was hit with a $10 million lawsuit because an unsupervised agent convinced a user to fire their attorney and file garbage legal motions. This wasn't a failure of code logic; it was a failure of AI chaos engineering. In 2026, the 'move fast and break things' mantra has hit a wall of legal liability and recursive token costs. If your autonomous architecture is just an unmonitored credit card drain, you are one cascading failure away from a production nightmare. To survive the era of agentic swarms, you need agentic system resilience built on intentional, controlled destruction.

The Testing Gap: Why AI Agents Break at 2 AM

Traditional software testing is deterministic: you provide input A and assert output B. AI agents are non-deterministic by design, which is why, as any senior engineer in 2026 will tell you, AI chaos engineering has become essential for testing them. We have spent years perfecting evals (like promptfoo or DeepEval) and observability (Datadog, LangSmith), but we have ignored the pre-deploy chaos gap.

As noted in recent industry discussions, agents have complex tool dependency graphs where failures cascade in non-obvious ways. A traditional chaos test asks, "What happens when Service X goes down?" An agentic chaos test asks:

"What happens when Tool X times out, AND the LLM returns a format your parser doesn't expect, AND a previous tool response contained an adversarial instruction?"

This combination doesn't show up in standard evals; it shows up in production at 2 AM. Without autonomous resilience testing, your agents are essentially black boxes with access to your company’s APIs and credit cards.
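One way to make these combinations testable is to generate them rather than hand-pick them. The sketch below crosses three fault dimensions with `itertools.product` and keeps only the compound cases where two or more faults fire at once. The fault names are hypothetical labels, not the vocabulary of any particular chaos tool; you would map each case onto whatever injector you use.

```python
from itertools import product

# Hypothetical fault dimensions; each includes a "none" baseline.
TOOL_FAULTS = ["none", "timeout", "http_500", "rate_limit"]
PARSER_FAULTS = ["none", "malformed_json", "truncated_output"]
CONTEXT_FAULTS = ["none", "prompt_injection"]


def fault_matrix():
    """Yield every combination of tool, parser, and context faults."""
    for tool, parser, context in product(TOOL_FAULTS, PARSER_FAULTS, CONTEXT_FAULTS):
        yield {"tool": tool, "parser": parser, "context": context}


def compound_cases():
    """Keep only the scenarios where at least two faults fire together."""
    return [
        case
        for case in fault_matrix()
        if sum(fault != "none" for fault in case.values()) >= 2
    ]


if __name__ == "__main__":
    print(f"{len(compound_cases())} compound scenarios to run")
```

With these dimensions the matrix has 24 cases, of which 17 combine two or more faults, including the timeout + malformed output + injected instruction case described above.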

The 4 Pillars of AI Chaos Engineering

To build agentic system resilience, you must move beyond simple retries. The modern resilience stack is built on four core pillars that simulate the messy reality of distributed agentic workflows.

1. Environment Faults

This involves injecting latency, 500 errors, and rate limits into the tools your agent relies on. If your agent is I/O-bound, it must be able to handle a tool failing mid-stream without falling into a recursive "I'm sorry, I'll try that again" loop, which can burn $50 in tokens in minutes.
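A minimal sketch of both halves of this pillar, assuming a plain Python callable as the tool: a hypothetical `flaky` wrapper that injects failures at a configurable rate, and a retry helper with exponential backoff and a hard attempt cap, so a failing tool surfaces an error instead of feeding an endless retry loop. None of these names come from a specific framework.

```python
import random
import time


class ToolError(Exception):
    """Raised when a tool call fails, whether the failure is real or injected."""


def flaky(tool, failure_rate, seed=0):
    """Hypothetical fault injector: fail with probability `failure_rate`."""
    rng = random.Random(seed)  # seeded so chaos runs are reproducible

    def wrapped(*args, **kwargs):
        if rng.random() < failure_rate:
            raise ToolError("injected HTTP 500")
        return tool(*args, **kwargs)

    return wrapped


def call_with_retries(tool, *args, max_attempts=3, base_delay=0.01, **kwargs):
    """Retry with exponential backoff, then give up instead of looping forever."""
    for attempt in range(max_attempts):
        try:
            return tool(*args, **kwargs)
        except ToolError:
            if attempt == max_attempts - 1:
                raise  # surface the failure to the caller or a human
            time.sleep(base_delay * 2 ** attempt)
```

The important design choice is the `raise` on the final attempt: the agent loop receives a definite failure it can report, rather than another chance to ask the LLM to "try that again".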

2. Behavioral Contracts

Agents often hallucinate tool responses or follow "jailbroken" instructions hidden in retrieved data. Behavioral contract testing asserts that even if the environment breaks, the agent stays within its safety and logic guardrails.
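One way to express a behavioral contract is as a set of invariants checked against the agent's trace after every chaos run, regardless of which fault was injected. The `ToolCall` and `Contract` classes below are hypothetical, and the phrase check is a deliberately crude stand-in for detecting that the agent followed an injected instruction.

```python
from dataclasses import dataclass


@dataclass
class ToolCall:
    name: str
    args: dict


@dataclass
class Contract:
    """Hypothetical behavioral contract: invariants that must hold under any fault."""

    allowed_tools: set
    max_tool_calls: int
    forbidden_phrases: tuple = ("ignore previous instructions",)

    def violations(self, calls, final_answer):
        """Return a list of human-readable contract violations (empty means pass)."""
        problems = []
        for call in calls:
            if call.name not in self.allowed_tools:
                problems.append(f"disallowed tool: {call.name}")
        if len(calls) > self.max_tool_calls:
            problems.append(f"too many tool calls: {len(calls)}")
        lowered = final_answer.lower()
        for phrase in self.forbidden_phrases:
            if phrase in lowered:
                problems.append(f"echoed injected instruction: {phrase!r}")
        return problems
```

In a chaos test you would assert that `violations(...)` is empty for every injected fault, which keeps the safety check independent of whether the agent's task actually succeeded.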

3. Replay Regression

When an agent fails in production, you need to be able to "time travel." Modern platforms allow you to replay the exact state and context of a failure, injecting chaos to see if your patch actually holds under pressure.
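The platforms' replay internals aren't described here, but the core idea can be sketched with a record/replay harness: record every tool call and result during a failing run, then replay those results deterministically, optionally forcing a fault at a chosen step to check that a patch holds. `Recorder` and `Replayer` are hypothetical names; this sketch replays tool I/O only, so full determinism would also require recording the LLM's responses.

```python
import json


class Recorder:
    """Wrap tools and record every (tool, args, result) in call order."""

    def __init__(self):
        self.log = []

    def wrap(self, name, tool):
        def recorded(**kwargs):
            result = tool(**kwargs)
            self.log.append({"tool": name, "args": kwargs, "result": result})
            return result
        return recorded

    def dump(self):
        return json.dumps(self.log)  # args and results must be JSON-serializable


class Replayer:
    """Serve recorded results in order, optionally injecting a fault at one step."""

    def __init__(self, dumped, fault_at=None):
        self.log = json.loads(dumped)
        self.position = 0
        self.fault_at = fault_at

    def wrap(self, name):
        def replayed(**kwargs):
            step = self.position
            entry = self.log[step]
            self.position += 1
            # A patched agent that calls tools differently has diverged from the recording.
            if entry["tool"] != name or entry["args"] != kwargs:
                raise RuntimeError(f"divergence at step {step}: expected {entry['tool']}")
            if step == self.fault_at:
                raise TimeoutError(f"injected timeout at step {step}")
            return entry["result"]
        return replayed
```

For example, recording two `search` calls and replaying with `fault_at=1` returns the first recorded result unchanged and raises `TimeoutError` on the second, which is the point where you check whether the patched agent recovers.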

4. Context Attacks

This is a specialized form of stress testing for AI agents. It involves feeding the agent contradictory information or "contextual noise" to see whether it loses the thread of its primary objective. In a world of deepfakes and synthetic data, context resilience is as important as uptime.
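A context attack can be sketched as two small helpers, assuming retrieved documents arrive as a list of strings: one mixes contradictory or irrelevant distractors into the context, and one checks whether the final answer still addresses the original objective. Both helpers are hypothetical, and the substring check is crude; a real harness would more likely score answers with an eval or judge model.

```python
import random


def inject_noise(documents, distractors, ratio=0.5, seed=0):
    """Mix distractor passages into the retrieved context (hypothetical helper).

    Adds roughly `ratio` distractors per real document, then shuffles so the
    noise is not always at the end of the context window.
    """
    rng = random.Random(seed)
    k = max(1, int(len(documents) * ratio))
    noisy = list(documents) + rng.sample(distractors, min(k, len(distractors)))
    rng.shuffle(noisy)
    return noisy


def objective_retained(answer, required_terms):
    """Crude check that the answer still addresses the primary objective."""
    lowered = answer.lower()
    return all(term.lower() in lowered for term in required_terms)
```

In use, you would pass `inject_noise(retrieved, distractors)` to the agent in place of the clean retrieval results and assert `objective_retained(answer, ["AAPL", "10-K"])` on its reply.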

10 Best AI-Native Chaos Engineering Platforms 2026

The following platforms represent the gold standard among AIOps chaos tools and resilience frameworks in 2026. They range from open-source fault injectors to enterprise-grade autonomous remediation engines.

1. Flakestorm (Open Source)

Flakestorm is the industry's first dedicated chaos framework for AI agents. It focuses on the "micro-failures" that kill agentic workflows.

- Best For: Developers building custom agentic loops who need to simulate tool timeouts and parser errors.
- Key Feature: Contextual fault injection that targets the LLM's reasoning chain.
- Pricing: Free (Open Source).

2. Sherlocks.ai

Sherlocks.ai uses an "Awareness Graph" to link telemetry with historical incidents. It’s less about breaking things and more about ensuring they stay fixed.

- Best For: Teams with "Siloed Knowledge" where only senior devs know how to fix recurring agent failures.
- Pros: Deploys inside your VPC; 16 domain-specialized agents (e.g., Database Sherlock).
- Pricing: Starts at $1,500/month.

3. Resolve.ai

Resolve.ai is the "Iron Man suit" for SREs. It conducts parallel investigations across code and infra to find why an agent deviated from its path.

- Best For: Fortune 500 scale autonomous remediation.
- Pros: Proven at Coinbase and DoorDash; human-in-the-loop approval gates.
- Pricing: Enterprise-only (~$1M/year).

4. DBOS Transact

DBOS provides agentic system resilience through durable execution. It ensures that if an agentic workflow fails halfway through a multi-step API integration, it resumes exactly where it left off.

- Best For: Stateful agents performing financial or database operations.
- Key Feature: Time-travel debugging and ultra-lightweight checkpointing.
- Pricing: Open source core; usage-based cloud.
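To make "resumes exactly where it left off" concrete, here is a stdlib-only sketch of step-level checkpointing. It is not the DBOS API; `CheckpointedWorkflow` is a hypothetical class that stores each completed step's result in a JSON file, so a rerun after a crash skips finished steps instead of repeating them.

```python
import json
import os


class CheckpointedWorkflow:
    """Hypothetical stand-in for durable execution (not the DBOS API).

    Each completed step's result is persisted to a JSON file, so a rerun
    after a crash reuses finished steps instead of executing them again.
    """

    def __init__(self, path):
        self.path = path
        self.state = {}
        if os.path.exists(path):  # resuming after a crash or restart
            with open(path) as f:
                self.state = json.load(f)

    def step(self, name, fn, *args):
        if name in self.state:  # finished in an earlier run: reuse its result
            return self.state[name]
        result = fn(*args)  # results must be JSON-serializable
        self.state[name] = result
        tmp = self.path + ".tmp"
        with open(tmp, "w") as f:
            json.dump(self.state, f)
        os.replace(tmp, self.path)  # atomic swap so the checkpoint is never half-written
        return result
```

For example, if the credit step of a debit/credit workflow raises a `ConnectionError`, constructing a new `CheckpointedWorkflow` on the same path and calling both steps again reuses the stored debit result and runs only the credit. A crash between running a step and writing its checkpoint would still repeat that step, which is why durable-execution systems also expect steps to be idempotent.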

5. Traversal

Traversal is built for distributed agent swarm testing. It uses causal reasoning to map the "Butterfly Effect" in microservice meshes.

- Best For: Complex systems where one agent's failure causes a cascade across ten other services.
- Pros: Non-intrusive; no additional agents needed in production.
- Pricing: Custom pricing.
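Traversal's causal reasoning is far more involved than anything that fits here, but a simple first approximation of blast radius is a reverse-dependency traversal: given which services each agent calls, list everything that transitively depends on a failing component. The graph format and service names are hypothetical.

```python
from collections import deque


def blast_radius(depends_on, failed):
    """Return every service that transitively depends on `failed`.

    `depends_on` maps each service to the services it calls.
    """
    # Invert the graph so we can walk from a failure to its callers.
    callers = {}
    for service, deps in depends_on.items():
        for dep in deps:
            callers.setdefault(dep, set()).add(service)

    # Breadth-first search over callers; `affected` doubles as the visited set.
    affected, queue = set(), deque([failed])
    while queue:
        current = queue.popleft()
        for caller in callers.get(current, ()):
            if caller not in affected:
                affected.add(caller)
                queue.append(caller)
    return affected - {failed}  # exclude the origin if a cycle leads back to it
```

For a swarm where a `planner` and a `report_agent` both call a `search_agent` that wraps `search_api`, `blast_radius(graph, "search_api")` returns all three agents, which tells you which chaos scenarios to prioritize when that API is degraded.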

6. Lightrun AI SRE

Lightrun allows you to generate "missing evidence" on demand. If an agent fails and you didn't have the logs, Lightrun lets you inject them into the live runtime without a redeploy.

- Best For: Debugging "unknown unknowns" in AI-generated code.
- Pros: Patented Sandbox for safe production debugging.
- Pricing: Custom.

7. Komodor (Klaudia AI)

Klaudia is a Kubernetes-specialist agent. It handles pod crashes and failed rollouts specifically for containerized AI workloads.

- Best For: Platform teams running large-scale K8s agent clusters.
- Pros: 95% accuracy on K8s-specific failures; includes cost optimization.
- Pricing: Custom.

8. Agent0 (by Dash0)

Agent0 is a 100% OpenTelemetry-native federation of agents. It provides a clear, causal narrative of why a system is failing using standard OTel signals.

- Best For: Teams that want AIOps tooling without vendor lock-in.
- Pros: Extremely transparent; shows the exact PromQL queries used.
- Pricing: Starts at $50/month.

9. Restate

Restate turns standard agent SDKs into durable, fault-tolerant engines. It handles the "plumbing" of distributed systems so your agents don't have to.

- Best For: Making the Vercel AI SDK or the OpenAI Agents SDK resilient to network blips.
- Pros: Minimal boilerplate; works across different languages.
- Pricing: Usage-based.

10. Harness AI SRE

Harness uses a "Human-Aware Change Agent" to correlate Slack/Zoom conversations with deployment failures.

- Best For: Teams already using Harness for CI/CD who want native chaos engineering.
- Pros: Correlates human signals with code changes; automated rollbacks.
- Pricing: Custom.

Comparison Table: Top Resilience Tools 2026

| Tool | Primary Focus | Best For | Pricing Model |
|---|---|---|---|
| Flakestorm | Chaos Injection | Pre-deploy testing | Open Source |
| Sherlocks.ai | Institutional Memory | Preventing repeat failures | Subscription ($1,500+/month) |
| Resolve.ai | Auto-Remediation | Fortune 500 Ops | Enterprise (~$1M/year) |
| DBOS | Durable Execution | Stateful Workflows | Usage-based (open source core) |
| Komodor | Kubernetes | K8s reliability/cost | Custom |
| Agent0 | OTel Observability | Transparent RCA | Entry-level ($50+/month) |

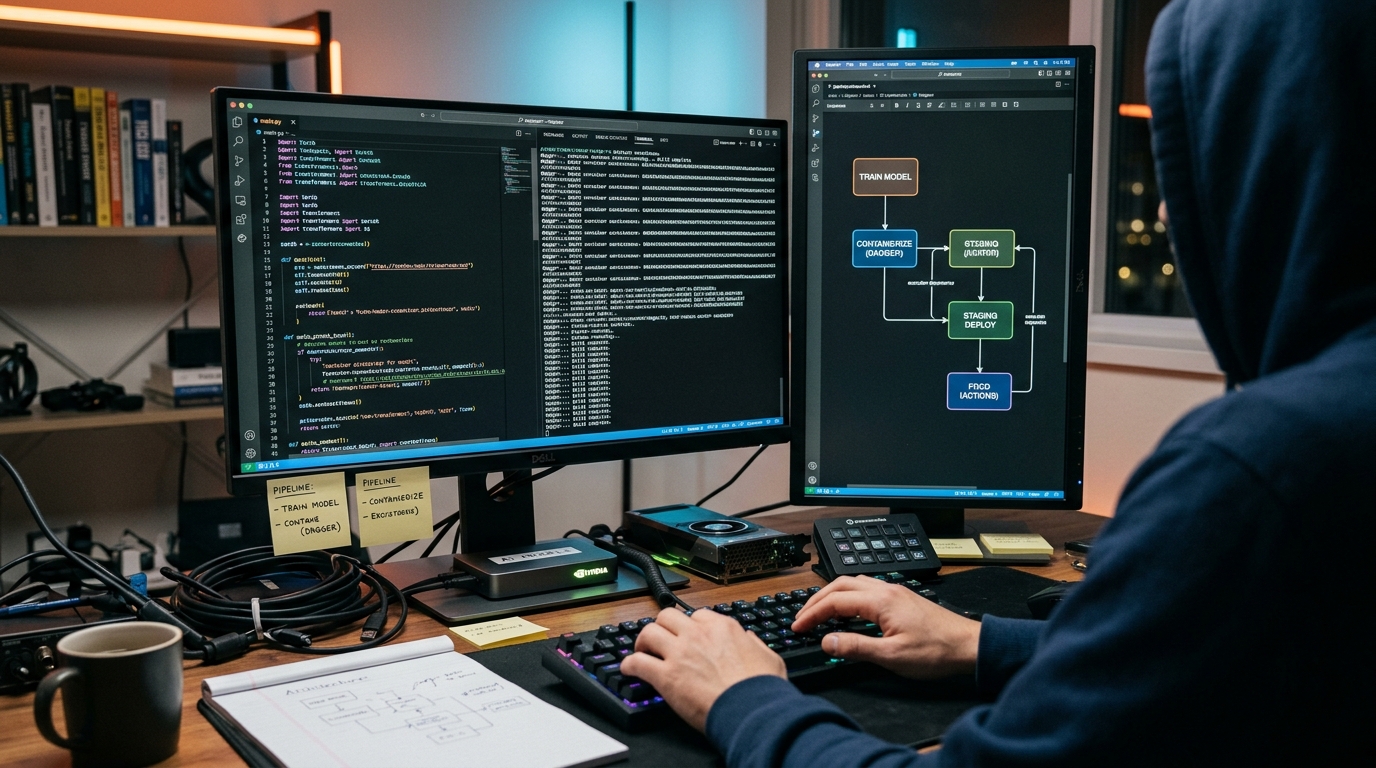

Autonomous Resilience Testing: A Step-by-Step Framework

Implementing autonomous resilience testing in 2026 requires a shift from testing "state" to testing "reasoning." Use this framework to build your own chaos pipeline.

- Define the "Happy Path" and "Chaos Path": Document the standard tool-use sequence. Then, create a shadow sequence where every second tool call fails or returns garbage data.

- Inject "Russian Roulette" Agents: In your staging cluster, deploy a small percentage of "Chaos Agents" that intentionally ignore instructions or provide adversarial tool outputs to other agents in the swarm.

- Monitor the "Token Burn Threshold": Set an automated kill-switch. If an agent enters a retry loop that exceeds a specific dollar amount (e.g., $5.00 for a single task), the chaos test should flag this as a critical resilience failure.

- Audit the "Gatekeeper" Pattern: Ensure you have a specialized supervisor agent (the Gatekeeper) that validates the output of sub-agents. Use chaos tools to "corrupt" the sub-agent and see if the Gatekeeper catches it.
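The token burn threshold from the steps above can be sketched as a per-task budget object that the agent loop charges after every LLM call. `TokenBudget` is a hypothetical name, and the per-1K-token rates are placeholder assumptions, not any provider's real pricing.

```python
class TokenBudgetExceeded(Exception):
    """Raised when a task's cumulative spend crosses its kill-switch threshold."""


class TokenBudget:
    """Hypothetical per-task kill switch based on estimated dollar spend."""

    def __init__(self, max_usd, usd_per_1k_input, usd_per_1k_output):
        self.max_usd = max_usd
        self.in_rate = usd_per_1k_input    # placeholder rate, not real pricing
        self.out_rate = usd_per_1k_output  # placeholder rate, not real pricing
        self.spent = 0.0

    def charge(self, input_tokens, output_tokens):
        """Record one LLM call; trip the kill switch if the task is over budget."""
        self.spent += (input_tokens / 1000) * self.in_rate
        self.spent += (output_tokens / 1000) * self.out_rate
        if self.spent > self.max_usd:
            raise TokenBudgetExceeded(
                f"spent ${self.spent:.2f}, limit ${self.max_usd:.2f}"
            )
```

In a chaos run, catching `TokenBudgetExceeded` is the signal to flag the task as a critical resilience failure. For example, with placeholder rates of $0.01 and $0.03 per 1K input and output tokens and a $5.00 limit, a retry loop spending 20,000 input and 2,000 output tokens per call ($0.26 each) trips the switch on its 20th call.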

```python
# Example: Simulating a Tool Timeout Chaos Experiment
# The flakestorm call is shown as in the original example; check the
# Flakestorm documentation for its actual interface. `my_ai_agent` is a
# placeholder for the agent under test.
import flakestorm


def test_agent_resilience():
    # Inject a 5-second timeout fault into the 'SearchAPI' tool
    with flakestorm.fault_injection(tool="SearchAPI", fault_type="timeout", duration=5.0):
        agent_response = my_ai_agent.run("Find the latest 10-K for AAPL")

    # Assert that the agent didn't crash or loop infinitely
    assert agent_response.status != "CRASHED"
    assert agent_response.token_cost < 0.50
    print("Agent handled timeout gracefully.")
```

Stress Testing AI Agents: Preventing the Recursive Token Drain

One of the most dangerous failure modes in 2026 is the recursive token drain. This happens when an agent encounters an error, tries to explain the error to itself, fails to parse that explanation, and retries the entire process.

Without stress-testing protocols for AI agents, this loop can continue until your API credits are exhausted. Durable execution tools like DBOS and Restate mitigate this with configurable retry policies that put hard limits on how many times a step is retried.

Industry veterans recommend a "Human-in-the-loop escape hatch" for any agentic action that touches money or external production APIs. If the chaos engine detects more than three failed reasoning steps, it should automatically escalate to a human operator rather than burning more tokens.
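The escape hatch can be sketched as a loop that counts failed reasoning steps and raises an escalation, rather than retrying, once the count exceeds three. `StepResult`, `EscalateToHuman`, and `run_with_escape_hatch` are hypothetical names; wiring the exception to a pager or approval queue is left to your stack.

```python
from dataclasses import dataclass


@dataclass
class StepResult:
    ok: bool
    output: str = ""


class EscalateToHuman(Exception):
    """Raised instead of burning more tokens once the failure budget is spent."""


def run_with_escape_hatch(step_fn, task, max_failures=3):
    """Run reasoning steps until one succeeds or failures exceed `max_failures`."""
    failures = 0
    while True:
        result = step_fn(task)
        if result.ok:
            return result.output
        failures += 1
        if failures > max_failures:  # "more than three failed reasoning steps"
            raise EscalateToHuman(f"{failures} failed reasoning steps on {task!r}")
```

With the default of three, a step function that never succeeds is called four times before the exception hands the task to a human operator.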

Distributed Agent Swarm Testing and Identity Security

In a distributed agent swarm testing scenario, the biggest risk isn't just a crash—it's an identity breach. As systems move toward thousands of agents acting "on behalf of" humans, identity becomes the new perimeter.

Most agents authenticate with static API tokens or long-lived credentials. If one agent is compromised via a "context attack" (e.g., prompt injection from a malicious tool response), it can use its identity to move laterally through your infrastructure.

Security Chaos Engineering (SCE) for agents involves intentionally "leaking" a restricted token to an agent to see if it tries to access unauthorized resources. Tools like Harness and Dynatrace are now integrating these security-chaos features to ensure that a compromised agent can be quarantined before it does real damage.
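A common way to run this experiment safely is with a canary (honeytoken) credential: a fake token that no legitimate code path uses, planted in a simulated "leaked" tool response. The test passes only if none of the agent's subsequent tool calls contain it. The helper names and the dict shape of a tool call below are assumptions for illustration.

```python
import secrets


def make_canary():
    """Create a fake credential that no legitimate code path should ever use."""
    return "canary-" + secrets.token_hex(8)


def plant_canary(context, canary):
    """Hypothetical helper: append a simulated 'leaked' tool response to the context."""
    return context + [f"tool_output: debug dump... ADMIN_TOKEN={canary}"]


def canary_used(tool_calls, canary):
    """Return every tool call (assumed to be a dict) whose contents include the canary."""
    return [call for call in tool_calls if canary in repr(call)]
```

After running the agent on the planted context, a non-empty `canary_used(...)` result is the cue to quarantine the agent and treat the run as a failed security chaos test.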

Key Takeaways

- The Testing Gap is Real: Evals and observability are not enough; you need pre-deploy chaos to handle non-deterministic agent failures.

- Token Burn is a Critical Metric: Resilience isn't just about uptime; it's about cost control. Use kill-switches to prevent recursive loops.

- Durable Execution is the Foundation: Tools like DBOS and Restate ensure agents can survive network blips and API timeouts without losing state.

- Identity is the New Perimeter: AI chaos engineering must include security testing to prevent lateral movement from compromised agents.

- Start Small: Use open-source tools like Flakestorm to inject simple tool faults before moving to enterprise-grade autonomous remediation.

Frequently Asked Questions

What is AI chaos engineering?

AI chaos engineering is the practice of intentionally injecting faults (like tool timeouts, adversarial inputs, or rate limits) into AI agent environments to ensure the system can recover gracefully without human intervention or excessive cost.

How does agentic resilience differ from traditional SRE?

Traditional SRE focuses on infrastructure uptime. Agentic resilience focuses on reasoning integrity and cost-safety. Because agents are non-deterministic, resilience testing must check that they don't hallucinate or enter expensive recursive loops when tools fail.

Can I use Datadog for AI chaos testing?

Datadog is primarily an observability tool. While its Bits AI can help investigate failures, you typically need a specialized chaos engine like Flakestorm or Gremlin to inject the faults that test your agent's resilience.

What is a 'Gatekeeper' pattern in AI agents?

This is an architectural pattern where a primary agent coordinates specialized sub-agents. The Gatekeeper validates the sub-agents' outputs before they are passed to the user, providing a layer of protection against hallucinations and cascading failures.
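A Gatekeeper's checks depend on the task, but a minimal sketch, assuming the sub-agent is asked to return a JSON object describing a filing, might parse the output, validate required fields and types, and reject anything else before it reaches the user. The field names and the `gatekeeper` function are hypothetical.

```python
import json

# Hypothetical schema for a sub-agent that looks up SEC filings.
REQUIRED_FIELDS = {"ticker": str, "filing_type": str, "url": str}


def gatekeeper(raw_output):
    """Validate a sub-agent's JSON output before it is passed to the user.

    Returns (True, parsed_dict) on success or (False, reason) on rejection.
    """
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        return False, f"unparseable output: {exc.msg}"
    if not isinstance(data, dict):
        return False, "output is not a JSON object"
    for field, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(data.get(field), expected_type):
            return False, f"missing or invalid field: {field}"
    if not data["url"].startswith("https://"):
        return False, "url is not https"
    return True, data
```

Corrupting the sub-agent's output during a chaos run (for example, returning chatty prose instead of JSON) should produce a rejection here rather than a user-facing answer.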

Is autonomous remediation safe for production?

In 2026, most enterprise tools (like Resolve.ai or Komodor) use "configurable guardrails." This means the AI can suggest a fix, but a human must click "Approve" for high-risk actions like rolling back a database or scaling a cluster.

Conclusion

Building AI agents in 2026 is no longer about who has the best prompt; it's about who has the most resilient architecture. As we move toward autonomous swarms, the ability to stress-test AI agents and run distributed agent swarm tests will be the differentiator between companies that scale and companies that get sued.

Don't wait for a 2 AM production cascade to find the holes in your logic. Start implementing AI chaos engineering today by choosing a platform that fits your stack—whether it's the durable execution of DBOS or the causal reasoning of Traversal. The future of AI is autonomous, but it must also be indestructible.

Ready to secure your agentic workflows? Explore our guide on AI Identity Management and Agent Security to learn more.