Industry estimates for 2026 suggest that over 90% of AI models fail to reach production, not because of poor modeling but because of fragile infrastructure and fragmented delivery pipelines. The rise of 'vibe coding', where LLMs generate vast swaths of application logic, has created a massive 'last mile' problem: how do we securely review, test, and deploy this high-entropy code without burning out our DevOps teams? Traditional Jenkinsfiles and hand-maintained Terraform scripts are no longer enough. To survive the era of autonomous software, teams are shifting toward AI CI/CD platforms that treat deployment not as a static script but as an agentic, self-healing process. This guide covers the leading platforms for agentic DevOps and AI-powered deployment automation.

The Shift to Agentic DevOps: Why Traditional CI/CD is Breaking

Traditional CI/CD was built for a world where humans wrote code slowly and predictably. Today, AI agents can generate thousands of lines of code in seconds. This creates what engineers on Reddit's r/devops call 'high entropy' environments. If your pipeline isn't AI-native, you are essentially trying to route a firehose through a garden hose.

As one senior engineer noted in a recent community discussion:

"Specifically, errors with agents compound over time. And what is DevOps but a dependent chain of actions? You need the VPC before the container; the container before the code. A general-purpose agent can't get through these steps without a specialized platform."

AI CI/CD platforms solve this by introducing autonomous CI/CD pipelines that understand the context of the code they are deploying. They don't just run a test suite; they use LLMs to analyze logs, predict deployment failures, and even suggest (or execute) rollbacks based on real-time observability data.
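The senior engineer's point about dependent steps and compounding errors can be made concrete with a short sketch. The step names and the 95% per-step success rate below are illustrative assumptions, not figures from the discussion.

```python
from graphlib import TopologicalSorter

# Hypothetical deployment steps mapped to the steps they depend on.
DEPENDENCIES = {
    "vpc": set(),
    "container": {"vpc"},
    "code": {"container"},
}


def deployment_order(dependencies):
    """Return the steps in an order that satisfies every dependency."""
    return list(TopologicalSorter(dependencies).static_order())


def chain_success_probability(per_step_success, steps):
    """Probability that every step in a dependent chain succeeds."""
    return per_step_success ** steps


order = deployment_order(DEPENDENCIES)
print(order)  # ['vpc', 'container', 'code']
print(round(chain_success_probability(0.95, len(order)), 3))  # 0.857
print(round(chain_success_probability(0.95, 20), 3))  # 0.358
```

Even a step that succeeds 95% of the time leaves a 20-step chain finishing cleanly only about a third of the time, which is why a long dependent chain needs checkpoints rather than a single end-to-end agent run.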

Critical Features of AI-Native CI/CD Platforms in 2026

Before choosing a platform, you must ensure it can handle the specific demands of LLM-driven software delivery. Generic PaaS solutions often fall short when it comes to the specialized hardware and iterative nature of AI.

- GPU Orchestration: Modern AI apps require A100s, H100s, or the newer B200 accelerators. The platform must handle GPU scheduling and driver management automatically.

- Agentic Model Context Protocol (MCP) Support: The ability for an AI agent to 'read' your infrastructure state and 'write' changes via secure, restricted protocols.

- Self-Healing Pipelines: AI-powered log analysis that identifies 'drift' or performance degradation and triggers automated fixes.

- Multi-Cloud Flexibility: The ability to move workloads between AWS, GCP, and private clouds to optimize for GPU availability and cost.

- Secure Code Review Agents: Automated 'tollgates' that use LLMs to check AI-generated code for security vulnerabilities before it hits production.

| Feature | Traditional CI/CD | AI-Native CI/CD (2026) |
|---|---|---|
| Hardware | Standard CPU/RAM | Automatic GPU Provisioning (H100/B200) |
| Scaling | Rules-based (CPU > 80%) | Predictive (Traffic & Model Latency) |
| Deployment | Manual/Scripted | Agentic/Goal-Oriented |
| Error Handling | Fail & Alert | Root Cause Analysis & Auto-Rollback |
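The difference between the two scaling rows in the table can be sketched in a few lines. This is a deliberately simple illustration: the linear forecast, the traffic samples, and the per-replica capacity are assumptions, and a real platform would use a far richer model.

```python
import math


def rule_based_replicas(current_replicas, cpu_utilization, threshold=0.80):
    """Add one replica only after CPU utilization has crossed the threshold."""
    if cpu_utilization > threshold:
        return current_replicas + 1
    return current_replicas


def forecast_next(samples):
    """Extrapolate the next value from the average step between samples."""
    if len(samples) < 2:
        return samples[-1]
    average_step = (samples[-1] - samples[0]) / (len(samples) - 1)
    return samples[-1] + average_step


def predictive_replicas(requests_per_second, capacity_per_replica):
    """Size the fleet for the forecast load rather than the current load."""
    forecast = max(forecast_next(requests_per_second), 0.0)
    return max(1, math.ceil(forecast / capacity_per_replica))


traffic = [100, 140, 180, 220]  # hypothetical requests per second
print(rule_based_replicas(2, cpu_utilization=0.65))  # 2
print(predictive_replicas(traffic, capacity_per_replica=100))  # 3
```

The rule-based function does nothing until the threshold is already breached, while the predictive one scales ahead of the trend it sees in the traffic samples.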

1. Northflank: The Full-Stack Powerhouse

Northflank has emerged as a leader in the 2026 landscape by bridging the gap between traditional web services and heavy-duty AI workloads. It is a full-stack platform that allows you to deploy LLMs, vector databases, and inference APIs alongside your standard application code.

Why it’s a top pick: Northflank offers native support for high-performance NVIDIA accelerators (B200, H200, H100). Unlike other platforms that force you to manage complex Kubernetes clusters to get GPU access, Northflank abstracts the infrastructure. You get a Git-to-production workflow that feels like Heroku but has the power of a dedicated AI lab.

- Multi-Cloud/BYOC: You can deploy on Northflank's managed cloud or bring your own cloud (AWS, GCP, Azure) while maintaining the same workflow.

- One-Click AI Stacks: Pre-configured templates for Qwen, DeepSeek, and Ollama allow for rapid deployment.

- Transparent Pricing: Per-second billing for compute and storage, which is critical when running expensive GPU workloads.
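To see why billing granularity matters for GPU workloads, consider the arithmetic below. The hourly rate is a made-up figure for illustration, not a price quoted by Northflank or any other provider.

```python
import math

HOURLY_RATE_USD = 4.00  # hypothetical GPU price, not a quoted rate


def cost_per_second_billing(runtime_seconds, hourly_rate):
    """Charge only for the seconds actually used."""
    return runtime_seconds * hourly_rate / 3600


def cost_hourly_billing(runtime_seconds, hourly_rate):
    """Charge for every started hour, as coarse-grained billing would."""
    return math.ceil(runtime_seconds / 3600) * hourly_rate


seven_minutes = 7 * 60
print(round(cost_per_second_billing(seven_minutes, HOURLY_RATE_USD), 2))  # 0.47
print(cost_hourly_billing(seven_minutes, HOURLY_RATE_USD))  # 4.0
```

For short, bursty jobs such as a seven-minute evaluation run, the gap between the two models is large, and it repeats on every pipeline run.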

2. AWS SageMaker: The Enterprise Standard

For teams already deep in the Amazon ecosystem, AWS SageMaker remains the heavyweight champion of AI-powered deployment automation. It is an end-to-end platform that covers everything from data labeling to real-time inference endpoints.

Key Features:

- SageMaker Pipelines: Purpose-built CI/CD for machine learning that automates the steps of the ML lifecycle.

- Model Monitor: Automatically detects drift in data and model quality for production models, alerting DevOps teams when a model's performance begins to degrade.

- Integration: Deep hooks into S3, Lambda, and Amazon Bedrock.
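The idea behind drift monitoring can be illustrated with a generic check that compares live values against a baseline. This is a simplified stand-in and not how SageMaker Model Monitor is implemented; the sample scores and the threshold of two standard deviations are assumptions.

```python
from statistics import mean, stdev


def drift_score(baseline, live):
    """Shift of the live mean from the baseline mean, in baseline standard deviations."""
    spread = stdev(baseline)
    if spread == 0:
        return 0.0 if mean(live) == mean(baseline) else float("inf")
    return abs(mean(live) - mean(baseline)) / spread


def has_drifted(baseline, live, threshold=2.0):
    """Flag drift when the live mean moves more than `threshold` deviations."""
    return drift_score(baseline, live) > threshold


# Hypothetical model confidence scores captured at launch and in production.
baseline_scores = [0.90, 0.92, 0.88, 0.91, 0.89]
live_scores = [0.80, 0.78, 0.82, 0.79, 0.81]

print(has_drifted(baseline_scores, baseline_scores))  # False
print(has_drifted(baseline_scores, live_scores))  # True
```

In a pipeline, a positive result would typically raise an alert or open a retraining ticket rather than change production on its own.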

The Trade-off: As noted in Reddit discussions, SageMaker has a steep learning curve. It is powerful but requires dedicated ML platform engineers to manage effectively. It’s not a "vibe coding" tool; it’s an enterprise engine.

3. Google Vertex AI: The AutoML Leader

Google Vertex AI is the go-to for teams that want best-in-class AutoML capabilities. Google has integrated its Gemini models directly into the platform, enabling AI-assisted continuous integration workflows in which the platform itself suggests model optimizations.

Why it stands out:

- Vertex AI Search and Conversation: Simplifies the creation of RAG (Retrieval-Augmented Generation) systems.

- Unified UI: Manages datasets, notebooks, and pipelines in a single pane of glass.

- GCP Ecosystem: Native integration with BigQuery and Dataflow makes data ingestion seamless.

4. Azure Machine Learning: The Corporate Integrator

Microsoft’s Azure Machine Learning is the natural choice for organizations already utilizing the Microsoft 365 and Azure DevOps suites. It provides the strongest enterprise governance and security features in the market.

Capabilities:

- MLOps with Azure DevOps: Seamlessly integrates with existing Azure CI/CD pipelines.

- Responsible AI Dashboard: Built-in tools for fairness, interpretability, and error analysis.

- Hybrid Support: Strongest support for on-premises and edge deployments via Azure Arc.

5. GitHub Actions + Copilot Extensions: The Developer Favorite

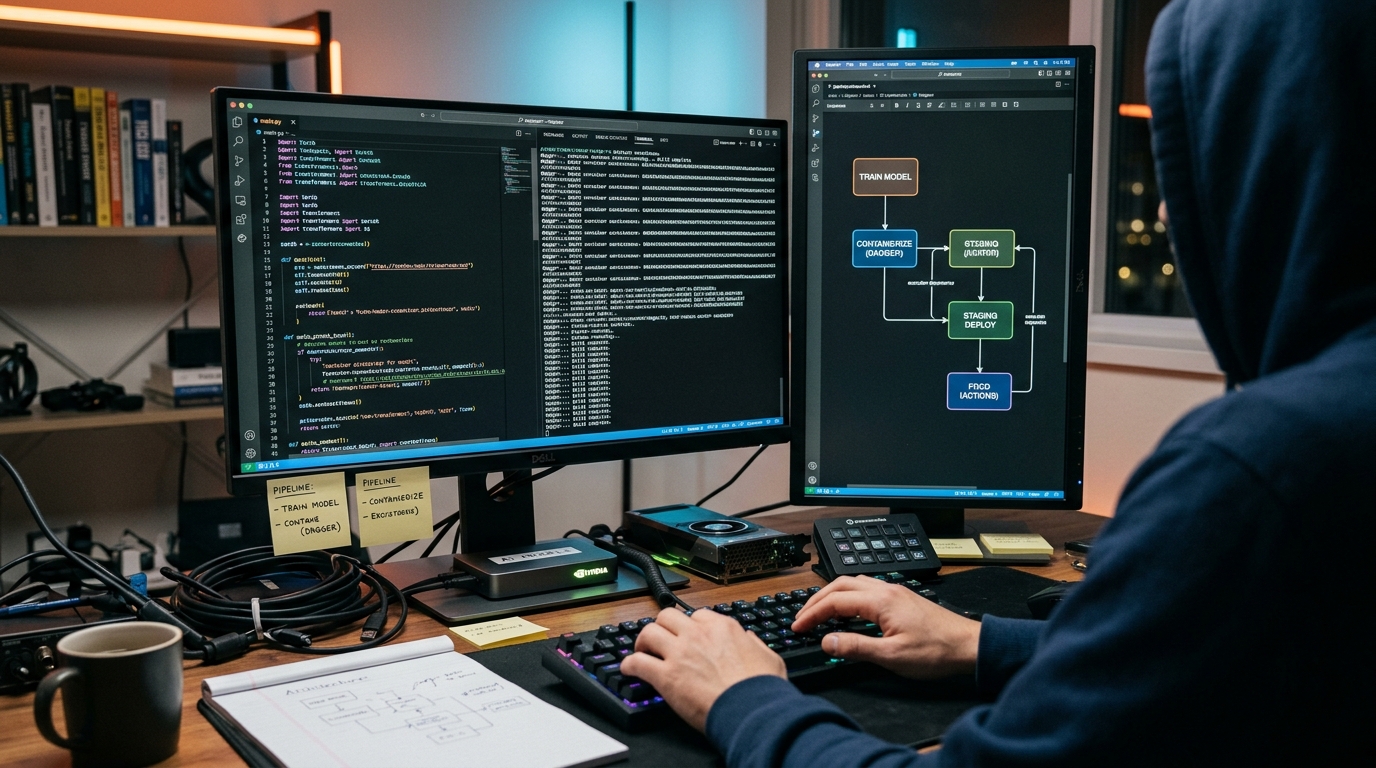

In 2026, GitHub Actions has evolved from a simple runner into a core component of the agentic DevOps tools ecosystem. By combining Actions with GitHub Copilot Extensions and GitHub Agents, teams can now build pipelines that literally write themselves.

The Workflow:

1. AI Code Generation: Copilot writes the feature.

2. AI-Driven PR Review: An agent reviews the PR, checking for security flaws and performance regressions.

3. Automated Deployment: Actions deploy the code to a preview environment, where an AI-driven smoke test is performed.
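The gating logic of this workflow can be sketched as a sequence of stages that stops at the first failure. The stage functions below are hypothetical stand-ins (a naive secret check instead of a real review agent, a canned health status instead of a real smoke test) and do not call any GitHub API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class StageResult:
    name: str
    passed: bool
    detail: str = ""


def run_pipeline(stages: list[Callable[[dict], StageResult]], change: dict) -> list[StageResult]:
    """Run stages in order and stop at the first failure."""
    results = []
    for stage in stages:
        result = stage(change)
        results.append(result)
        if not result.passed:
            break
    return results


# Hypothetical stand-ins for the review agent, preview deploy, and smoke test.
def review_pull_request(change: dict) -> StageResult:
    findings = [line for line in change["diff"] if "password=" in line]
    return StageResult("review", not findings, f"{len(findings)} hard-coded secret(s)")


def deploy_preview(change: dict) -> StageResult:
    return StageResult("deploy_preview", True, f"preview for {change['branch']}")


def smoke_test(change: dict) -> StageResult:
    return StageResult("smoke_test", change.get("health_status") == 200)


STAGES = [review_pull_request, deploy_preview, smoke_test]
clean = {"branch": "feature/x", "diff": ["+ timeout = 30"], "health_status": 200}
risky = {"branch": "feature/y", "diff": ['+ password="hunter2"'], "health_status": 200}

print([r.name for r in run_pipeline(STAGES, clean)])  # ['review', 'deploy_preview', 'smoke_test']
print([r.name for r in run_pipeline(STAGES, risky)])  # ['review']
```

The important property is that a failed review prevents the deploy stage from ever running, regardless of how the code was generated.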

This "Git-to-Production" loop is the fastest way for startups to move, though it lacks the deep GPU orchestration of specialized platforms like Northflank.

6. GitLab CI/CD: The Unified DevSecOps Platform

GitLab continues to push the boundaries of "Single Application" DevOps. Their AI-powered features, like GitLab Duo, assist in every stage of the pipeline, from writing the .gitlab-ci.yml file to explaining why a build failed.

Strengths:

- Security First: Built-in vulnerability scanning for AI models and training data.

- Self-Managed Options: Crucial for fintech and healthcare companies that cannot use SaaS for sensitive AI workloads.

- Observability: Integrated monitoring that links model performance directly back to the code commit.

7. Hugging Face Inference Endpoints: The Prototyping King

If you are working with open-source models (Llama 3, Mistral, etc.), Hugging Face Inference Endpoints is the path of least resistance. It allows you to turn any model on the Hub into a production-ready API in seconds.

Best For:

- Rapid Prototyping: Ideal for testing whether a specific model fits your use case without setting up infrastructure.

- Serverless Scaling: Automatically scales based on request volume, so you don't pay for idle GPUs.

- Security: Offers private endpoints to ensure your data never leaves your VPC.

8. Databricks Lakehouse: The Big Data Specialist

For AI applications that rely on massive datasets, Databricks is unrivaled. It combines the best of data lakes and data warehouses with a unified ML platform.

Key Advantage: Databricks' acquisition of MosaicML has allowed them to offer deep optimization for model training and deployment. Their Unity Catalog provides a single governance layer for data and AI assets, which is a major requirement for compliance in 2026.

9. BentoML: The Open-Source Framework

BentoML is not a cloud platform per se, but a framework that simplifies the "packaging" of AI models for deployment. It is the core of many autonomous CI/CD pipelines because it standardizes how models are served.

Why Developers Love It:

- Framework Agnostic: Works with PyTorch, TensorFlow, Scikit-Learn, and more.

- BentoCloud: Their managed service provides a serverless experience for deploying BentoML-packaged models.

- Scalability: Designed to handle high-throughput inference with ease.

10. Replicate: The Experimentation Hub

Replicate makes it incredibly easy to run machine learning models in the cloud with just a few lines of code. While it may not have the enterprise features of AWS or the full-stack depth of Northflank, it is the favorite for developers who want to "just make it work."

Highlights:

- Massive Library: Access to thousands of community models for image generation, text, and audio.

- Pay-as-you-go: Only pay for the time the model is actually running.

- Simple API: No need to understand Docker or Kubernetes; just send a JSON request.

The MCP Revolution: Connecting LLMs to Infrastructure

A major trend in 2026 is the adoption of the Model Context Protocol (MCP). As discussed in the DevOps community, MCP acts as a secure bridge between AI agents and your cloud infrastructure.

Instead of giving an AI agent full admin access to your AWS account (a "terrible idea," according to most senior engineers), MCP allows for a read-only or policy-restricted interface.

How it works in CI/CD:

- The AI agent asks: "Why did the staging deployment fail?"

- The MCP server queries the Kubernetes logs and Terraform state.

- The agent responds: "The ingress controller is missing a TLS secret. Would you like me to generate a PR to fix the Terraform module?"
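The read-versus-write split in this exchange can be sketched in plain Python. This simulates the pattern only and does not use the MCP SDK or wire protocol; the class, tool names, and log line are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class RestrictedToolServer:
    """Exposes read-only tools directly; turns every other request into a proposal."""

    read_tools: dict = field(default_factory=dict)
    proposals: list = field(default_factory=list)

    def call(self, tool_name, **arguments):
        if tool_name in self.read_tools:
            return self.read_tools[tool_name](**arguments)
        # Anything not registered as read-only is treated as a write
        # and queued for human review instead of being executed.
        self.proposals.append({"tool": tool_name, "arguments": arguments})
        return "queued for human approval"


# Hypothetical read-only tool backed by canned log lines.
def get_deployment_logs(environment):
    logs = {"staging": ["ingress: TLS secret 'web-tls' not found"]}
    return logs.get(environment, [])


server = RestrictedToolServer(read_tools={"get_deployment_logs": get_deployment_logs})
print(server.call("get_deployment_logs", environment="staging"))
print(server.call("apply_terraform", module="ingress"))
print(len(server.proposals))  # 1
```

The agent can diagnose freely, but the only way it can change infrastructure is by leaving a proposal for a human to review.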

This approach reduces entropy and keeps the human engineer in control while leveraging the speed of AI agents.

Managing the 'AI Intern': Human-in-the-Loop Strategies

One of the most insightful analogies from the Reddit discussions is viewing AI in DevOps as a "technically capable intern with subpar listening skills and no common sense."

To successfully use AI-powered deployment automation, you must implement the following safeguards:

- Strict Tollgates: Never allow an AI to merge code to production without human approval. Use the AI to prepare the release, not execute it.

- Policy as Code: Use tools like Open Policy Agent (OPA) to define what the AI can and cannot do. If the AI suggests opening port 22 to the world, the policy engine should block it automatically.

- Context Injection: AI agents are only as good as the data they have. Feed your CI/CD agents your documentation, previous incident reports, and architecture diagrams to improve their reasoning.

- Verification Steps: After an AI-driven deployment, have a separate, non-AI automated suite verify the system's health. Don't let the intern check their own work.
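The tollgate and policy-as-code safeguards above can be combined in a small check. In practice the policy would usually be written in OPA's own policy language; the Python below is a stand-in sketch, and the rule format (`cidr`, `from_port`, `to_port`) is an assumed shape for a proposed firewall change.

```python
import ipaddress


def violations(proposed_rules):
    """Return a reason for every rule that exposes SSH to the whole internet."""
    reasons = []
    for rule in proposed_rules:
        network = ipaddress.ip_network(rule["cidr"])
        covers_ssh = rule["from_port"] <= 22 <= rule["to_port"]
        # A prefix length of 0 means the rule matches every address.
        if covers_ssh and network.prefixlen == 0:
            reasons.append(f"port 22 open to {rule['cidr']}")
    return reasons


def tollgate(proposed_rules, human_approved):
    """Allow a change only if policy passes and a human has approved it."""
    return not violations(proposed_rules) and human_approved


safe = [{"cidr": "10.0.0.0/8", "from_port": 22, "to_port": 22}]
unsafe = [{"cidr": "0.0.0.0/0", "from_port": 22, "to_port": 22}]

print(violations(unsafe))  # ['port 22 open to 0.0.0.0/0']
print(tollgate(safe, human_approved=True))  # True
print(tollgate(safe, human_approved=False))  # False
print(tollgate(unsafe, human_approved=True))  # False
```

Note that human approval cannot override a policy violation here: both conditions must hold before the change proceeds.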

Key Takeaways

- Agentic DevOps is the new standard: 2026 is the year of goal-oriented deployment rather than script-oriented deployment.

- GPU availability is the bottleneck: Platforms like Northflank and RunPod are winning because they provide easier access to the hardware needed for LLMs.

- Human-in-the-loop is mandatory: Treat AI agents as high-speed assistants, not replacements for senior engineers.

- MCP is the bridge: The Model Context Protocol is essential for safely connecting LLMs to production infrastructure.

- Full-stack matters: The best platforms handle the model and the surrounding infrastructure (databases, APIs, caching).

Frequently Asked Questions

What is an AI-native CI/CD platform?

An AI-native CI/CD platform is a software delivery system designed specifically for the unique needs of AI applications. Unlike traditional CI/CD, these platforms offer native GPU orchestration, automated model versioning, and AI-driven observability to manage the high-entropy code generated by LLMs.

How do agentic DevOps tools differ from traditional automation?

Traditional automation follows a rigid, step-by-step script (e.g., "Run test, then build, then deploy"). Agentic DevOps tools are goal-oriented. You give the agent a goal (e.g., "Deploy this feature to staging and ensure latency stays under 200ms"), and the agent determines the necessary steps, handles errors, and adjusts configurations autonomously.
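A goal-oriented loop of this kind can be sketched as follows. The simulated environment, the remediation functions, and the latency formula are all invented for illustration; a real agent would read observability data and choose actions with far more context.

```python
def pursue_goal(measure_latency_ms, remediations, target_ms=200, max_attempts=3):
    """Apply remediations in turn until latency is under the target or attempts run out."""
    actions = []
    for attempt in range(max_attempts + 1):
        latency = measure_latency_ms()
        if latency < target_ms:
            return {"met": True, "latency_ms": latency, "actions": actions}
        if attempt == max_attempts or attempt >= len(remediations):
            break
        action = remediations[attempt]
        action()
        actions.append(action.__name__)
    return {"met": False, "latency_ms": latency, "actions": actions}


# Simulated staging environment; these numbers are made up for the example.
state = {"replicas": 1, "cache": False}


def measure_latency_ms():
    latency = 480 / state["replicas"]
    return latency * 0.5 if state["cache"] else latency


def add_replica():
    state["replicas"] += 1


def enable_cache():
    state["cache"] = True


result = pursue_goal(measure_latency_ms, [add_replica, enable_cache])
print(result)
# {'met': True, 'latency_ms': 120.0, 'actions': ['add_replica', 'enable_cache']}
```

The caller states the goal (latency under 200 ms) and a budget of attempts; the loop decides which steps to take and reports back either success or the point at which it gave up.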

Can AI replace DevOps engineers in 2026?

No. While AI can automate routine tasks like writing boilerplate Terraform or parsing logs, it lacks the high-level system architecture knowledge and business context required for complex infrastructure decisions. The role of the DevOps engineer is shifting from "builder" to "orchestrator" and "policy-maker."

Is it safe to give AI agents access to my AWS/GCP account?

Only with strict guardrails. Experts recommend using the Model Context Protocol (MCP) with read-only permissions for information gathering. For write actions, agents should submit Pull Requests or execute commands in isolated sandbox environments that require human approval before reaching production.

What are the best GPUs for AI deployment in 2026?

The NVIDIA B200 (Blackwell) and H100/H200 (Hopper) are the current gold standards for high-performance inference. For smaller workloads or cost-sensitive projects, the L40S or A10 offer a great balance of performance and price.

Conclusion

The transition to AI CI/CD platforms is no longer optional for teams building at the speed of light. As we move further into 2026, the complexity of LLM-driven software delivery will only increase. By leveraging agentic DevOps tools and platforms like Northflank, AWS SageMaker, or GitHub Actions, you can transform your deployment pipeline from a bottleneck into a competitive advantage.

Remember: the goal isn't just to automate; it's to automate smarter. Keep your human engineers in the loop, enforce strict security policies, and choose a platform that can handle the heavy lifting of GPU orchestration. The future of software is autonomous—is your pipeline ready?
