In early 2026, a single announcement from Anthropic regarding a new set of 'Legal Skills' for Claude sent shockwaves through the Nasdaq, wiping 7% off the value of established software giants in a single afternoon. This market volatility highlights a sobering reality for modern engineering teams: in the era of generative AI, a single unmanaged feature release can disrupt entire industries or destroy your brand's credibility. To survive, teams are moving away from manual toggles toward AI-aware feature flag tools and agentic feature management systems that can automatically detect likely hallucinations and trigger rollbacks within milliseconds.

Traditional feature flagging, the simple act of wrapping code in an if/else statement, is no longer enough on its own. Today, we are managing non-deterministic outputs, shifting LLM context windows, and 'vibe coding' environments where the AI itself often suggests the features it wants to enable. If you aren't using AI-driven automated rollouts to monitor these agentic workflows, you aren't just shipping code; you're shipping liabilities.

The Shift to Agentic Feature Management

In 2026, the industry has transitioned from 'Feature Management' to agentic feature management. This means the system doesn't just wait for a developer to flip a switch; it uses AI agents to monitor telemetry, sentiment analysis, and model performance to decide who sees what feature.

As noted in recent industry discussions, the rise of specialized AI 'skills'—like the Anthropic legal plugin—has proven that general-purpose models are now competing directly with vertical SaaS. When a model update occurs, the 'wrapper' companies that don't have robust dynamic AI feature toggles in place are the first to suffer. If your application relies on an LLM, your feature flags must now account for:

- Model Latency Spikes: Automatically falling back to a smaller, faster model (e.g., from Claude Opus 4.5 to a Haiku model) if latency exceeds 500ms.

- Hallucination Thresholds: Disabling a feature if the AI's confidence score drops below a threshold you have set.

- Cost Governance: Toggling off expensive agentic workflows for free-tier users in real-time.

This isn't just about A/B testing anymore; it's about building a 'validation architecture' where AI explores freely but is gated by manual or automated validation before reaching the end user.
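The three checks in the list above can be sketched as a single routing decision. This is a minimal illustration, not any vendor's API: the `Signals` fields, the `choose_route` function, the route names, and the 0.8 confidence cutoff are all assumptions made for the example. Only the 500ms latency limit comes from the text.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    p95_latency_ms: float  # recent 95th-percentile latency of the primary model
    confidence: float      # evaluator score in [0, 1]; how it is computed is up to you
    user_tier: str         # "free" or "paid"


def choose_route(s: Signals,
                 max_latency_ms: float = 500.0,
                 min_confidence: float = 0.8) -> str:
    """Return the path to serve: 'disabled', 'legacy', 'small-model' or 'large-model'."""
    if s.user_tier == "free":
        return "disabled"      # cost governance: no expensive agent for the free tier
    if s.confidence < min_confidence:
        return "legacy"        # hallucination threshold breached
    if s.p95_latency_ms > max_latency_ms:
        return "small-model"   # latency spike: fall back to the faster model
    return "large-model"
```

The order of the checks is a design choice: cost and quality gates run before the latency fallback, so a low-confidence feature is never served just because the smaller model happens to be fast.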

1. LaunchDarkly: The Enterprise Standard for AI Workflows

LaunchDarkly remains the undisputed leader in the space, but in 2026, it has pivoted heavily toward agentic feature management. Their platform now includes specialized triggers for LLM performance, allowing teams to manage feature flagging for LLM apps at a massive scale.

LaunchDarkly’s 'AI Insights' engine can ingest data from your observability stack (like Datadog or New Relic) and automatically roll back a feature if it detects a correlation between a new flag and an increase in LLM token costs. This is critical for enterprise teams where a runaway agentic loop could cost thousands of dollars in minutes.
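To make the runaway-cost scenario concrete, here is a small sketch of a spend circuit breaker. It is not LaunchDarkly's implementation or API. `SpendBreaker`, its parameters, and the latching behavior are hypothetical, and a real setup would call the flag platform to turn the flag off where this code only sets `tripped`.

```python
from collections import deque


class SpendBreaker:
    """Trips when LLM spend inside a sliding time window exceeds a budget."""

    def __init__(self, budget_usd: float, window_s: float):
        self.budget_usd = budget_usd
        self.window_s = window_s
        self.events = deque()  # (timestamp, cost) pairs, oldest first
        self.tripped = False

    def record(self, now: float, cost_usd: float) -> bool:
        """Record one LLM call; return True if the feature may stay on."""
        self.events.append((now, cost_usd))
        # Drop calls that have aged out of the window.
        while self.events and self.events[0][0] <= now - self.window_s:
            self.events.popleft()
        if sum(cost for _, cost in self.events) > self.budget_usd:
            self.tripped = True  # latched: stays off until someone resets it
        return not self.tripped
```

Latching the breaker is deliberate in this sketch: once a loop has burned through the budget, it seems safer to require a human reset than to re-enable the feature automatically when the window rolls over.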

Why LaunchDarkly for AI in 2026?

- Sophisticated Targeting: Granular control over which user segments receive specific AI model versions.

- Real-Time Flag Evaluation: Millisecond propagation across global edge networks.

- Guardrails: Automated kill-switches based on custom AI performance metrics.

2. Statsig: The Data-Driven Powerhouse for LLM Apps

Statsig has become the go-to AI-powered A/B testing platform. While other tools focus on the 'toggle,' Statsig focuses on the 'why.' In 2026, their integration with data warehouses like Snowflake and BigQuery allows for deep analysis of how AI features impact long-term user retention.

For teams building agentic workflows, Statsig provides a 'Pulse' view that shows exactly how a new AI agent is performing compared to a control group. Are users actually completing tasks faster, or are they just spending more time 'chatting' with a hallucinating bot?

Statsig Key Features:

- Advanced Statistical Modeling: Understand if an AI feature's success is statistically significant.

- ML-Driven Insights: Automatically identifies which user cohorts are most receptive to new AI tools.

- Warehouse Native: No need to send your sensitive AI telemetry to a third-party server; keep it in your own stack.
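As a rough illustration of what "statistically significant" means when comparing an AI agent against a control group, the sketch below runs a textbook two-proportion z-test on task completion counts. It is not how Statsig computes its results; the function name and the sample numbers are made up for the example.

```python
import math


def two_proportion_z(success_a: int, n_a: int, success_b: int, n_b: int):
    """Return (z, two-sided p-value) for H0: both groups share one success rate.

    Assumes the pooled rate is strictly between 0 and 1; otherwise the
    standard error is zero and the test is undefined.
    """
    p_a, p_b = success_a / n_a, success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value


# Control: 500 of 1000 tasks completed. New agent: 560 of 1000.
z, p = two_proportion_z(500, 1000, 560, 1000)
print(f"z={z:.2f}, p={p:.4f}")
```

A small p-value only says the difference is unlikely to be noise. Whether users are finishing tasks faster or just chatting longer still depends on choosing the right success metric.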

3. ConfigCat: Tailored for Android and Mobile AI Safety

ConfigCat has carved out a niche as the best tool for Android mobile development teams. As AI agents move onto mobile devices (Edge AI), managing feature rollouts on fragmented hardware becomes a nightmare. ConfigCat simplifies this with a straightforward, developer-friendly interface.

As mentioned in the LatticeOcean research, ConfigCat is ideal for 'bold bets.' If you are launching a new mobile-based AI assistant, ConfigCat allows you to isolate that feature and remotely disable it if it causes battery drain or overheating—common issues with local LLM execution in 2026.

| Feature | ConfigCat Advantage |
|---|---|
| Best For | Mobile & Android Teams |
| Primary Strength | Simplicity and Speed |
| AI Use Case | Remote control of local LLM features |
| Pricing | Highly competitive for small-to-mid teams |

4. GrowthBook: Open-Source Experimentation for 2026

For teams that demand transparency and avoid vendor lock-in, GrowthBook is the premier open-source choice. Among the AI feature flag tools of 2026, GrowthBook stands out by allowing developers to customize their experimentation logic entirely.

GrowthBook is particularly popular among the 'vibe coding' community—developers who build rapidly using AI prompts. Because it’s open-source, you can bake GrowthBook's SDK directly into your agentic frameworks, allowing the agents themselves to query their own feature states to adjust their behavior.
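The idea of an agent querying its own feature state can be sketched without any particular SDK. In the example below, `FlagStore` is a hypothetical in-memory stand-in for a flag client (GrowthBook's real SDK has its own calls, which are not shown here), and the flag keys `agent-web-search` and `agent-model` are invented for illustration.

```python
class FlagStore:
    """Stand-in for a flag SDK client; real SDKs expose their own lookup calls."""

    def __init__(self, flags: dict):
        self._flags = dict(flags)

    def value(self, key: str, default):
        return self._flags.get(key, default)


class Agent:
    def __init__(self, flags: FlagStore):
        self.flags = flags

    def plan(self, task: str) -> dict:
        """Build a plan whose tools and model depend on the current flag state."""
        use_search = self.flags.value("agent-web-search", False)
        return {
            "task": task,
            "model": self.flags.value("agent-model", "small"),
            "steps": (["search"] if use_search else []) + ["draft", "self-check"],
        }


agent = Agent(FlagStore({"agent-web-search": True, "agent-model": "large"}))
print(agent.plan("summarize NDA"))
```

Reading flags at plan time rather than at startup means a remote change takes effect on the agent's next task, with conservative defaults applying whenever a flag is missing.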

5. Split: Performance Monitoring Meets AI Toggles

Split (now part of Harness) excels at the intersection of performance and feature management. In 2026, Split’s 'Instant Rollback' feature is fueled by AI telemetry. If a new agentic rollout causes a 5% increase in error rates, Split doesn't just alert you; it kills the flag before your Slack even pings.

This is essential for feature flagging for LLM apps, where 'errors' aren't always 500 codes. Sometimes an error is a subtle shift in the tone of an AI response. Split’s ability to monitor these nuanced metrics makes it a top-tier choice.
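A kill-switch rule of this kind might look like the sketch below. It is not Split's implementation: `should_kill` and its parameters are hypothetical, the 5% figure is read here as a relative increase over the control group's error rate, and an "error" can be any failed check you record, including a tone or quality check on the model's response.

```python
def should_kill(baseline_errors: int, baseline_total: int,
                treated_errors: int, treated_total: int,
                max_relative_increase: float = 0.05,
                min_samples: int = 200) -> bool:
    """Return True when the flagged group's error rate exceeds the allowed increase."""
    if baseline_total < min_samples or treated_total < min_samples:
        return False  # too little data to act on
    baseline_rate = baseline_errors / baseline_total
    treated_rate = treated_errors / treated_total
    return treated_rate > baseline_rate * (1 + max_relative_increase)


# 2.0% errors in control vs 3.0% with the flag on: well past a 5% relative increase.
print(should_kill(20, 1000, 30, 1000))
```

The `min_samples` guard is there so that a handful of early failures cannot kill a flag on their own; the right value depends on your traffic.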

6. Unleash: The Choice for Self-Hosted AI Governance

As discussed in the Reddit threads regarding European regulations and the AI Act, data residency is a massive hurdle. European legal tech firms often cannot simply upload sensitive data to US-based clouds. Unleash addresses this by offering a powerful, enterprise-grade feature flag platform that can be self-hosted.

Unleash for EU Compliance:

- On-Premise Deployment: Keep your feature flag logic and user data behind your own firewall.

- Governance: Strict audit logs and permission sets for large legal and healthcare teams.

- Custom Strategies: Define rollout rules based on complex regulatory requirements.

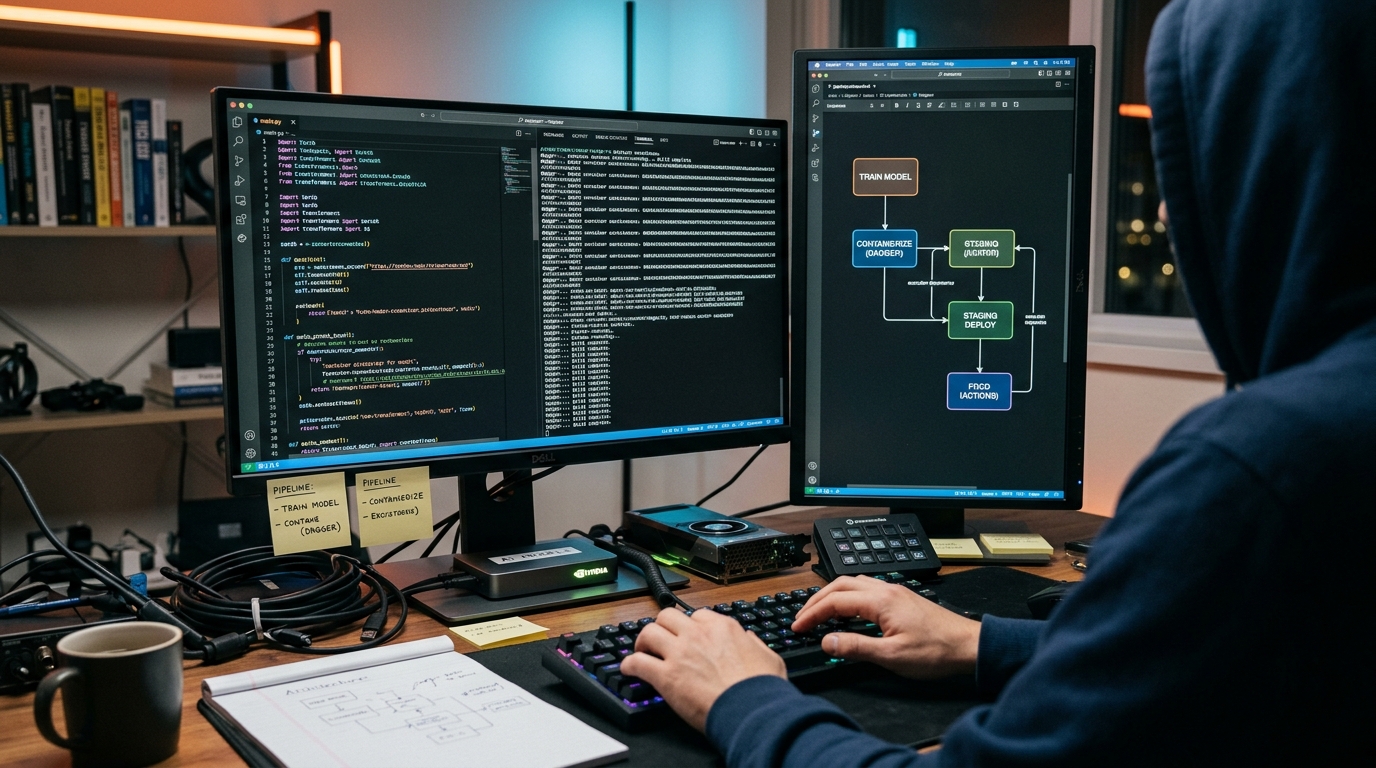

7. Harness: Orchestrating Agentic Rollouts in CI/CD

Harness takes a platform-wide approach, integrating feature flags directly into the CI/CD pipeline. For teams practicing AI-driven automated rollouts, Harness provides a 'Pipeline as Code' experience where feature flags are just another step in the deployment orchestration.

In 2026, Harness uses AI to predict the success of a rollout before it even starts. By analyzing past deployment data, it can warn developers if a new feature flag is likely to conflict with existing agentic workflows.

8. DevCycle: Developer-First Flagging for Modern Stacks

DevCycle is built for speed. Its 'Clean Code' approach ensures that feature flags don't become technical debt. In the fast-paced world of AI development, where models are swapped weekly, DevCycle’s ability to manage flag lifecycles is a lifesaver.
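One simple way to keep flags from turning into technical debt is to list the ones that have been fully on or fully off for a while and are therefore candidates for removal from the code. The sketch below is a generic illustration, not DevCycle's feature; the record shape (`key`, `rollout_percent`, `last_changed`) and the 30-day cutoff are assumptions.

```python
from datetime import datetime, timedelta


def stale_flags(flags, now: datetime, max_age_days: int = 30):
    """Return keys of flags stuck at 0% or 100% for longer than max_age_days."""
    cutoff = now - timedelta(days=max_age_days)
    return sorted(
        f["key"] for f in flags
        if f["rollout_percent"] in (0, 100) and f["last_changed"] < cutoff
    )


flags = [
    {"key": "new-agent", "rollout_percent": 100, "last_changed": datetime(2026, 1, 1)},
    {"key": "prompt-b", "rollout_percent": 50, "last_changed": datetime(2025, 12, 1)},
    {"key": "fresh", "rollout_percent": 100, "last_changed": datetime(2026, 2, 20)},
]
print(stale_flags(flags, now=datetime(2026, 3, 1)))
```

Flags that are still mid-rollout, like `prompt-b` here, are left alone regardless of age, since they are still doing work.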

Their SDKs are optimized for the latest frameworks, including those used for RAG (Retrieval-Augmented Generation) and multi-agent systems. If you're building with LangChain or CrewAI, DevCycle’s integration is seamless.

9. Flagsmith: Unified SDKs for Global AI Scaling

Flagsmith provides a unified API and SDK experience that makes it easy to manage flags across web, mobile, and server-side AI. For companies building 'omni-channel' AI agents, Flagsmith ensures that a feature enabled on the web is instantly reflected on the mobile app.

Why Flagsmith?

- Open Source Core: Start free and scale as you grow.

- Remote Config: Change AI prompt templates or model parameters without a code deploy.

- Simplicity: One of the easiest dashboards for non-technical product managers to use.
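The remote config idea in the list above can be sketched as follows. This is an assumption-heavy illustration and does not use Flagsmith's SDK: the JSON payload shape, the `DEFAULTS` keys, and both function names are hypothetical, and fetching the payload from the flag service is left out.

```python
import json
from string import Template

# Safe values used when the remote payload is missing or malformed.
DEFAULTS = {"prompt_template": "Summarize: $text", "temperature": 0.2}


def load_config(remote_json: str) -> dict:
    """Merge a remote JSON payload over the defaults; bad payloads are ignored."""
    try:
        remote = json.loads(remote_json)
    except json.JSONDecodeError:
        return dict(DEFAULTS)
    if not isinstance(remote, dict):
        return dict(DEFAULTS)
    # Accept only known keys, so a typo cannot introduce a new setting.
    known = {k: v for k, v in remote.items() if k in DEFAULTS}
    return {**DEFAULTS, **known}


def build_prompt(config: dict, text: str) -> str:
    """Fill the configured template with the user's text."""
    return Template(config["prompt_template"]).safe_substitute(text=text)


cfg = load_config('{"prompt_template": "Summarize for a lawyer: $text"}')
print(build_prompt(cfg, "NDA between A and B"))
```

Falling back to defaults on any parse problem keeps a bad dashboard edit from taking the feature down, which matters more when non-technical teammates can change the values.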

10. Flipper Cloud: Streamlined Developer Control

Flipper Cloud is the managed version of the popular Ruby-based Flipper gem, but it has expanded to support multiple languages in 2026. It is the 'no-nonsense' choice for developers who want to get dynamic AI feature toggles up and running in minutes without a steep learning curve.

While it lacks the deep statistical analysis of Statsig, it excels at providing a reliable, centralized dashboard for 'vibe coding' projects and internal AI tools.

The Anthropic Impact: Why Feature Flagging for LLM Apps is Mandatory

The recent Reddit discourse surrounding Anthropic's 'Legal Skills' release is a cautionary tale. When Anthropic released its MCP (Model Context Protocol) and specialized skills, the market panicked because it threatened to 'destroy' specialized legal tech wrappers like Harvey and Legora.

However, the more nuanced take from industry experts is that these wrappers aren't dead—they are just being forced to provide more value than a simple UI. As one Reddit user pointed out:

"The gap isn’t model quality—it’s workflow + verification + enterprise controls + consistency. Our team does not buy ‘capability.’ We buy repeatable work product we can rely on under pressure."

This is where AI feature flag tools become the 'moat.' If you are building a specialized AI tool, you use feature flags to:

- Verify Outputs: Gate AI responses through a human-in-the-loop (HITL) flag for high-stakes legal or medical tasks.

- A/B Test Prompts: Use flags to compare 'Prompt A' vs 'Prompt B' across thousands of real-world cases.

- Shadow Rollouts: Run a new model in 'shadow mode,' where it generates responses in the background but doesn't show them to users, to verify accuracy against your legacy system.
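For the prompt A/B testing use case, the key requirement is that the same user always sees the same prompt variant. A common way to get that is hash-based bucketing, sketched below. The function names and the experiment key are hypothetical, and commercial flag tools implement their own bucketing schemes.

```python
import hashlib


def bucket(user_id: str, experiment: str, buckets: int = 100) -> int:
    """Map a user to a stable bucket in [0, buckets) for one experiment."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % buckets


def prompt_variant(user_id: str, experiment: str, percent_b: int) -> str:
    """Return 'B' for roughly percent_b percent of users and 'A' for the rest."""
    return "B" if bucket(user_id, experiment) < percent_b else "A"


print(prompt_variant("user-123", "legal-summary-prompt", percent_b=50))
```

Including the experiment name in the hash keeps assignments independent across experiments, and raising `percent_b` only moves users from A to B, never back.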

Without these controls, you are at the mercy of the model providers. With them, you have a defensible, enterprise-grade platform.

How to Implement Dynamic AI Feature Toggles

Implementing dynamic AI feature toggles requires a shift in how we think about code. Here is a high-level technical blueprint for an agentic rollout in 2026.

Step 1: Define the Telemetry Metric

Don't just look at CPU/RAM. For AI, you need to monitor 'Semantic Drift' or 'Cost per Successful Task.'
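'Cost per Successful Task' is straightforward to compute once each run is logged with its cost and outcome. The sketch below assumes a simple list of `(cost_usd, succeeded)` pairs, a format chosen for the example; how you decide that a task "succeeded" is the harder part and is not covered here.

```python
def cost_per_successful_task(runs):
    """runs: iterable of (cost_usd, succeeded) pairs.

    Returns total spend divided by the number of successful runs,
    or None when nothing succeeded.
    """
    total_cost = 0.0
    successes = 0
    for cost_usd, succeeded in runs:
        total_cost += cost_usd  # failed runs still cost money
        successes += bool(succeeded)
    return total_cost / successes if successes else None


runs = [(0.10, True), (0.20, False), (0.30, True)]
print(cost_per_successful_task(runs))
```

Counting the cost of failed runs in the numerator is the point of the metric: an agent that is cheap per call but fails often can still be expensive per useful result.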

Step 2: Set the Agentic Trigger

Use a tool like LaunchDarkly or Statsig to create a trigger.

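```python
# Example of an agentic rollback trigger in Python.
# feature_flags, ai_agent, fast_model, legacy_process, statsig and LatencyException
# are placeholders for your own clients and wrappers, not exact vendor SDK calls.
def handle_legal_doc(legal_doc, user_id):
    if not feature_flags.is_enabled("new-legal-reasoning-agent", user_id):
        return legacy_process(legal_doc)
    try:
        response = ai_agent.execute_task(legal_doc)
    except LatencyException:
        # Dynamic toggle to a faster model
        return fast_model.execute_task(legal_doc)
    if response.hallucination_score > 0.1:
        # Auto-report and trigger local fallback
        statsig.log_event("hallucination_detected", response.id)
        return legacy_process(legal_doc)
    return response
```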

Step 3: The Shadow Rollout

Before going 100% live, use a strategy called 'shadowing.' Send the user request to both the old and new AI agents, but show the user only the old agent's response. Compare the results. If the new agent's output is consistently 'better' (based on your internal LLM-as-a-judge), the feature flag tool can automatically increase the rollout percentage from 5% to 20%.
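The shadowing step can be sketched in a few lines. Everything here is illustrative: the agents and the judge are plain callables you would supply, the 0.6 promotion threshold and the 5/20/50/100 ladder are assumptions, and a real system would run the shadow call asynchronously rather than in the request path.

```python
def shadow_compare(reqs, old_agent, new_agent, judge):
    """Serve old_agent's answers while scoring new_agent in the background.

    Returns (served_responses, win_rate of the new agent).
    """
    served, wins = [], 0
    for req in reqs:
        old_out = old_agent(req)
        new_out = new_agent(req)  # generated but never shown to the user
        served.append(old_out)
        wins += judge(req, old_out, new_out) == "new"
    win_rate = wins / len(reqs) if reqs else 0.0
    return served, win_rate


def next_rollout_percent(current: int, win_rate: float,
                         promote_at: float = 0.6,
                         ladder=(5, 20, 50, 100)) -> int:
    """Move one step up the ladder only when the new agent wins often enough."""
    if win_rate < promote_at:
        return current
    higher = [p for p in ladder if p > current]
    return higher[0] if higher else current
```

Here `judge` stands in for the LLM-as-a-judge mentioned above and is expected to return `"new"` or `"old"` for each request. Promoting one rung at a time keeps the exposure of each step bounded if the judge turns out to be wrong.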

Key Takeaways

- Agentic is the Standard: In 2026, feature flags are no longer manual; they are closed-loop systems that respond to AI performance metrics.

- Data Sovereignty Matters: For industries like legal and healthcare, self-hosted tools like Unleash make it far easier to meet European data residency and governance expectations.

- Wrappers Must Evolve: As Anthropic and OpenAI move down the stack, feature flagging is the key to building the 'verification architecture' that keeps specialized tools relevant.

- LaunchDarkly & Statsig Lead: These remain the 'Big Two' for enterprise-grade, AI-driven automated rollouts.

- Mobile AI Needs ConfigCat: For Android teams, the ability to isolate and remotely kill buggy AI features is a critical safety net.

Frequently Asked Questions

What are AI feature flag tools?

AI feature flag tools are software platforms that allow developers to remotely enable or disable application features. In 2026, these tools have evolved to include 'agentic' capabilities, automatically managing rollouts based on LLM performance, cost, and hallucination rates.

How does agentic feature management differ from traditional flagging?

Traditional flagging is manual and binary (on/off). Agentic feature management uses AI agents to monitor real-time telemetry and autonomously adjust feature states, roll back buggy updates, or swap AI models to optimize for cost or latency without human intervention.

Why is feature flagging critical for LLM apps?

LLMs are non-deterministic, meaning they can produce different results for the same input. Feature flagging allows teams to gate these outputs, perform A/B testing on prompts, and ensure that a model update doesn't lead to mass hallucinations or runaway API costs.

Can feature flags help with AI Act compliance in Europe?

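Yes, indirectly. Tools like Unleash allow for self-hosting and strict data governance, so sensitive legal or personal data used in AI workflows can stay within a specific jurisdiction. Data residency itself is driven more by GDPR and client confidentiality obligations than by the EU AI Act, but audit logs and controlled rollouts support the record-keeping and human oversight the Act expects of high-risk systems.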

Which tool is best for small AI startups in 2026?

For startups, Flagsmith or GrowthBook (open source) offer the best balance of power and cost. If the startup is mobile-focused, ConfigCat is the recommended choice for its ease of use on Android and iOS.

Conclusion

The software landscape of 2026 is a battlefield of 'vibe coding' and agentic loops. As we've seen from the market reaction to Anthropic's latest 'skills,' the line between a revolutionary feature and a company-ending bug is thinner than ever. By implementing one of the 10 best AI feature flag tools, you aren't just managing code; you're building a resilient, self-healing infrastructure capable of surviving the next wave of AI disruption.

Whether you're an enterprise giant using LaunchDarkly to manage global rollouts or a specialized legal tech firm using Unleash to stay compliant, the message is clear: Automate your agentic rollouts today, or be rolled over tomorrow.
