In 2026, the era of blind reliance on cloud-based AI APIs has officially ended. For the modern enterprise and the privacy-conscious developer, the local LLM server is no longer a luxury—it is a requirement for data sovereignty and cost efficiency. With the release of open-weight frontier models like Llama 4, GPT-OSS 120B, and Qwen3-Coder-480B, the performance gap between local hardware and proprietary cloud services has narrowed to a razor-thin margin. If you are still sending sensitive proprietary data to a third-party server in 2026, you are not just risking a data breach; you are throwing away thousands of dollars in token costs that could have been amortized into a one-time hardware investment.

- The State of Local AI in 2026: Why Self-Hosting Wins

- Hardware Fundamentals: VRAM, Bandwidth, and Quantization

- Top 5 Software Stacks for Local LLM Servers

- The 10 Best Hardware Stacks for Private AI (Entry-Level to Enterprise)

- The 2026 Model Leaderboard: What to Run Locally

- Step-by-Step: Deploying Your Local Inference Server

- Security and Hardening: Protecting Your Private AI Infrastructure

- Key Takeaways

- Frequently Asked Questions

The State of Local AI in 2026: Why Self-Hosting Wins

Setting up a local LLM server in 2026 is a fundamentally different experience than it was just two years ago. The ecosystem has matured into a robust, high-performance industry that rivals traditional cloud providers. The shift is driven by three primary catalysts: Privacy, Predictability, and Performance.

The Privacy Mandate

As AI agents become more integrated into our daily workflows (reading our emails, analyzing our financial spreadsheets, and managing our codebases), the risk of data leakage has reached critical levels. Local hosting is the most reliable way to guarantee that your "context window" doesn't become someone else's training data.

Predictable Unit Economics

Cloud providers have moved toward complex, multi-tiered pricing models. In contrast, once the hardware is paid for, a local setup allows for 24/7 inference at the marginal cost of electricity. For developers running agentic workflows that burn through millions of tokens per hour, a dual-RTX 5090 setup can pay for itself in less than four months.
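How quickly a build pays for itself depends entirely on your own numbers. Here is a minimal break-even sketch. It assumes you know your monthly cloud API bill and can estimate the machine's monthly electricity cost. The function name and the dollar figures in the usage note are hypothetical.

```bash
# Break-even sketch: months until a one-time hardware purchase pays for itself.
#   $1 = hardware cost (USD)
#   $2 = monthly cloud API spend the box replaces (USD)
#   $3 = monthly electricity cost of running the box (USD)
breakeven_months() {
  awk -v hw="$1" -v cloud="$2" -v power="$3" 'BEGIN {
    saving = cloud - power              # net monthly saving
    if (saving <= 0) { print "never"; exit }
    printf "%.1f\n", hw / saving        # months to recoup the hardware
  }'
}
```

For example, `breakeven_months 5000 1500 100` prints `3.6` for a hypothetical $5,000 build that replaces $1,500/month of API spend at $100/month of power. If electricity costs more than the API bill it replaces, the function prints `never`.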

Zero Latency and Offline Resilience

On capable hardware, local models can deliver a time-to-first-token under 100ms because they skip the network round-trips that plague cloud APIs. This is critical for real-time applications like voice assistants or IDE autocomplete. As one senior engineer on Reddit noted, "Running things locally gives you way more control over speed and cost... the real value lately isn't just the model but how you orchestrate it."

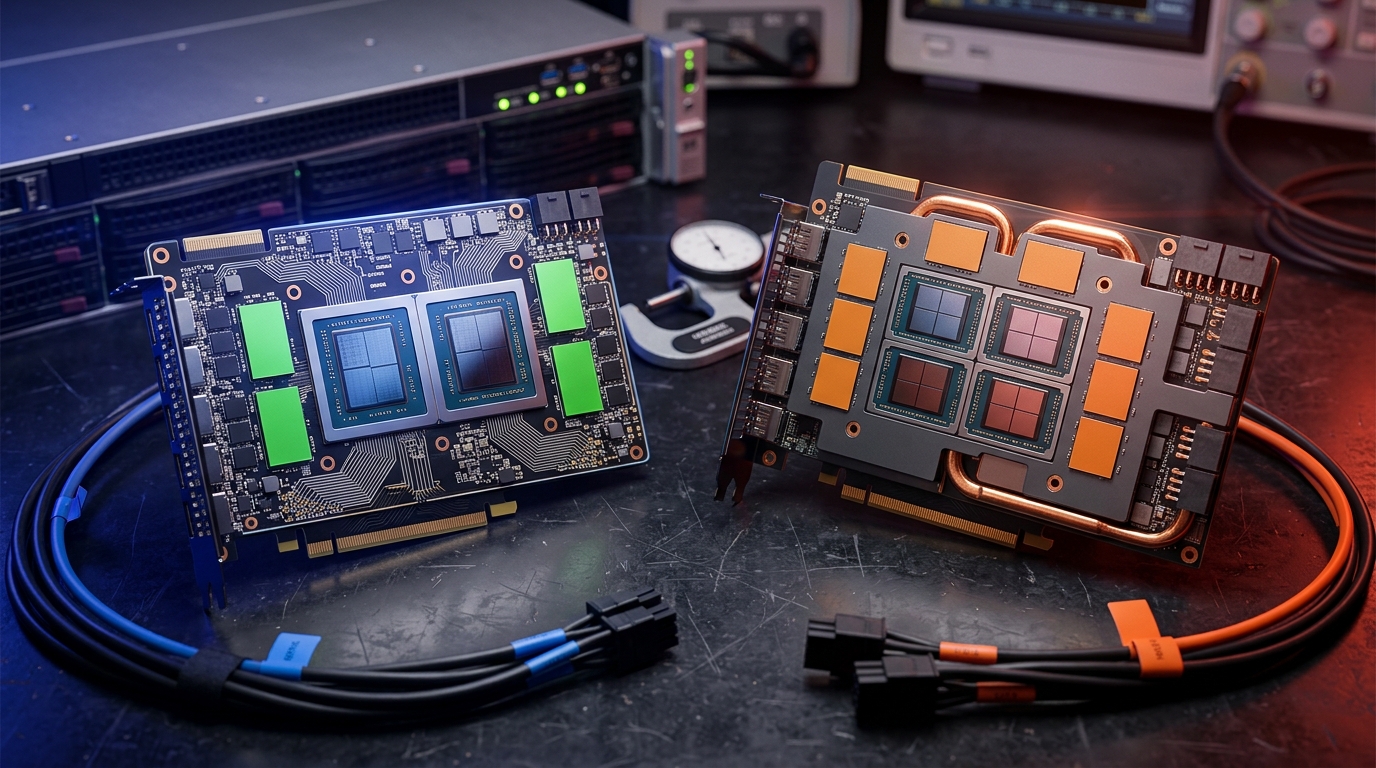

Hardware Fundamentals: VRAM, Bandwidth, and Quantization

Before selecting a stack, you must understand the holy trinity of local LLM hardware: VRAM capacity, memory bandwidth, and quantization levels. In 2026, the bottleneck is almost never the CPU; it is the speed and size of your GPU's memory.

VRAM: The Hard Ceiling

If the model doesn't fit in VRAM, the layers that don't fit spill over to system RAM and run on the CPU. If it runs on the CPU, it is (usually) too slow for a ChatGPT-like experience.

- 8GB - 16GB: Entry-level. Suitable for 7B to 14B models at 4-bit quantization.

- 24GB - 48GB: The sweet spot. Runs 30B models on 24GB and 70B models on 48GB, both at 4-bit.

- 96GB+: The professional tier. Required for 100B+ models like GPT-OSS or Qwen3-Coder.
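To see which tier a model needs, start from the weights alone, which take roughly parameters × bits per weight ÷ 8 gigabytes. Here is a minimal sketch. The ~4.8 bits-per-weight figure for 4-bit quants and the spare-memory reserve are rough assumptions, not measured values. The function names are hypothetical.

```bash
# Rough VRAM for the weights only: billions of params x bits per weight / 8 = GB.
# KV cache, activations, and the CUDA context need extra room on top of this.
#   $1 = parameter count in billions
#   $2 = effective bits per weight (16 for FP16, ~4.8 assumed for a Q4_K_M-style quant)
weights_gb() {
  awk -v p="$1" -v bpw="$2" 'BEGIN { printf "%.1f\n", p * bpw / 8 }'
}

# Succeeds (exit 0) if the weights plus $4 GB of spare room fit in $3 GB of VRAM.
fits() {
  awk -v p="$1" -v bpw="$2" -v vram="$3" -v spare="$4" \
    'BEGIN { exit !(p * bpw / 8 + spare <= vram) }'
}
```

For example, `weights_gb 14 4.8` prints `8.4`, and `fits 14 4.8 16 2` succeeds. Under these assumptions, a 14B model at 4-bit leaves plenty of room for context on a 16GB entry-level card.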

Memory Bandwidth: The Speed Limit

This determines how many tokens per second (t/s) your server can generate. A consumer card like the RTX 4090 offers ~1TB/s, while the newer RTX 5090 pushes closer to 1.8TB/s. Enterprise cards like the H100 or B200 provide multiple terabytes of bandwidth, essential for serving multiple concurrent users.
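Bandwidth sets a hard ceiling because, for a dense model, every generated token has to stream all of the weights out of VRAM once. Here is a back-of-envelope sketch of that bound. It ignores KV-cache reads and kernel overhead, so real single-user speeds land below it. The function name is hypothetical.

```bash
# Upper bound on single-stream decode speed for a dense model:
#   tokens/s <= memory bandwidth (GB/s) / weight size (GB)
#   $1 = memory bandwidth in GB/s
#   $2 = model weight size in GB
max_tps() {
  awk -v bw="$1" -v size="$2" 'BEGIN { printf "%.1f\n", bw / size }'
}
```

For an 18GB model (roughly a 30B model at ~4.8 bits per weight), `max_tps 1008 18` gives a ceiling of `56.0` t/s at the RTX 4090's 1,008 GB/s. `max_tps 1792 18` gives `99.6` t/s at the RTX 5090's 1,792 GB/s. Serving several users at once amortizes each weight read across a batch, which is why the extra bandwidth of data-center cards pays off for concurrency.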

The Magic of Quantization (GGUF, EXL2, AWQ)

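Quantization is the process of compressing model weights (e.g., from 16-bit to 4-bit) to save VRAM. The common formats are GGUF (used by llama.cpp and Ollama), EXL2 (ExLlamaV2), and AWQ (widely used with vLLM). In 2026, 4-bit quantization (the GGUF Q4_K_M type) is considered the industry standard. It offers roughly a 70% reduction in memory usage with only a small increase in perplexity (often quoted as under 2%), a rough proxy for output quality.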
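The ~70% figure follows from the bit widths once you account for overhead: a "4-bit" quant also stores per-block scales, so its effective size is assumed here to be about 4.8 bits per weight rather than a flat 4. Here is a minimal sketch of the calculation. The function name is hypothetical.

```bash
# Percentage of weight memory saved by quantizing from one precision to another.
#   $1 = original bits per weight (e.g. 16 for FP16/BF16)
#   $2 = quantized bits per weight (assumed ~4.8 for Q4_K_M, 4 for an ideal 4-bit)
savings_pct() {
  awk -v from="$1" -v to="$2" 'BEGIN { printf "%.0f\n", (1 - to / from) * 100 }'
}
```

`savings_pct 16 4.8` prints `70`. The theoretical best case, `savings_pct 16 4`, prints `75`.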

Top 5 Software Stacks for Local LLM Servers

Software orchestration is where the "mini-DevOps" nightmare begins. Choosing the right backend is critical for a stable private AI infrastructure.

| Software | Best For | Key Advantage | OS Support |
|---|---|---|---|
| Ollama | Personal Use | Zero-config, one-line CLI setup. | Win/Mac/Linux |
| vLLM | Production/API | PagedAttention, high throughput, multi-GPU. | Linux |
| LM Studio | Beginners/Discovery | Polished GUI, easy model benchmarking. | Win/Mac/Linux |
| LocalAI | Enterprise Integration | Drop-in replacement for OpenAI API. | Docker/Linux |
| Open WebUI | Interface/UX | Best ChatGPT-like UI with RAG support. | Docker |

1. Ollama: The Fast-Path Champion

Ollama remains the king of simplicity. With a single command (ollama run llama4), you can have a frontier model running as soon as the weights finish downloading. It pulls pre-quantized weights and handles GPU offloading automatically, which makes it the ideal starting point for most self-hosters.
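Beyond the interactive CLI, Ollama exposes a local REST API that scripts can call. Here is a minimal sketch that hits its `/api/generate` endpoint. It assumes the default port 11434 and that the `llama4` tag from the command above has been pulled. The helper names are hypothetical, and the JSON escaping handles only quotes and backslashes.

```bash
# Base URL of the local Ollama server (11434 is Ollama's default port).
OLLAMA_URL="${OLLAMA_URL:-http://localhost:11434}"

# Escape backslashes and double quotes so a string is safe inside JSON.
# Sketch only: newlines and other control characters are not handled.
json_escape() {
  printf '%s' "$1" | sed -e 's/\\/\\\\/g' -e 's/"/\\"/g'
}

# Build a non-streaming /api/generate request body: $1 = model tag, $2 = prompt.
ollama_payload() {
  printf '{"model":"%s","prompt":"%s","stream":false}' \
    "$(json_escape "$1")" "$(json_escape "$2")"
}

# Send the request; the reply is one JSON object whose "response" field holds the text.
ollama_generate() {
  curl -s "$OLLAMA_URL/api/generate" -d "$(ollama_payload "$1" "$2")"
}
```

Usage: `ollama_generate llama4 "Summarize PagedAttention in one sentence."` Setting `"stream": false` trades token-by-token output for a single reply that is easier to parse in scripts.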

2. vLLM: The Production Workhorse

For those serving multiple users or building apps, vLLM is the gold standard. It uses PagedAttention, which stores the KV cache in small fixed-size blocks instead of reserving a full-length buffer for every request, so far more concurrent requests fit in the same VRAM. The vLLM team reported up to 24x higher throughput than Hugging Face Transformers. It is the core of most AI inference server solutions in 2026.
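To see why KV-cache management matters, here is the arithmetic for a Llama-3-70B-style configuration: 80 layers, 8 grouped-query KV heads, head dimension 128, and an FP16 cache. These shape numbers, the 16-token block size, and the function names are assumptions used for illustration.

```bash
# Bytes of KV cache per token = 2 (K and V) x layers x KV heads x head dim x bytes/element.
kv_bytes_per_token() {
  local layers=$1 kv_heads=$2 head_dim=$3 elem_bytes=$4
  echo $(( 2 * layers * kv_heads * head_dim * elem_bytes ))
}

# PagedAttention-style allocation: a sequence occupies ceil(tokens / block_size)
# fixed-size blocks instead of a reservation for the maximum context length.
blocks_needed() {
  local tokens=$1 block_size=$2
  echo $(( (tokens + block_size - 1) / block_size ))
}

# GiB of KV cache for one sequence: $1-$4 as above, $5 = sequence length in tokens.
kv_gib() {
  local per_token
  per_token=$(kv_bytes_per_token "$1" "$2" "$3" "$4")
  awk -v b="$per_token" -v t="$5" 'BEGIN { printf "%.2f\n", b * t / 1073741824 }'
}
```

At 327,680 bytes (320 KiB) per token, one 8,192-token sequence holds `kv_gib 80 8 128 2 8192` = `2.50` GiB of cache. A backend that reserves the full context for every request wastes most of that on short chats. With 16-token blocks, a 1,000-token request needs 63 blocks and leaves only 8 unused slots in its last block.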

3. Open WebUI: The Front-End King

If you want a private ChatGPT clone, Open WebUI is the answer. It supports RAG (Retrieval-Augmented Generation), allowing you to upload PDFs and documents for the LLM to analyze locally. It also integrates seamlessly with Ollama and vLLM backends.

The 10 Best Hardware Stacks for Private AI (Entry-Level to Enterprise)

Selecting the right local hardware stack requires balancing your budget against the parameter count of the models you intend to run.

1. The Budget Hobbyist (RTX 4060 Ti 16GB)

- Cost: ~$450

- Capabilities: Runs Llama 4 8B or Gemma 3 12B at high speeds.

- Verdict: The cheapest way to get 16GB of VRAM. Great for coding assistants and basic chat.

2. The Prosumer Sweet Spot (RTX 3090/4090 24GB)

- Cost: $700 (Used 3090) - $1,800 (New 4090)

- Capabilities: Runs 30B models at Q4 or 14B models at Q8; 70B models need a second card or heavy CPU offloading.

- Verdict: The gold standard for local LLM enthusiasts. The 24GB VRAM allows for substantial context windows.

3. The Dual-GPU Powerhouse (2x RTX 3090/4090)

- Cost: $1,500 - $3,600

- Capabilities: 48GB total VRAM. Runs 70B models at Q4 with room for context; 120B-class models need more VRAM or partial CPU offloading.

- Verdict: Best value for high-end reasoning. Using NVLink (on 3090s) or PCIe 4.0/5.0, you can split models across both cards.
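Splitting works because tensor parallelism shards each layer across the cards, so each GPU holds roughly 1/N of the weights. Here is a sketch of the per-card budget. It assumes an even split and the same ~4.8 bits per weight used for 4-bit quants elsewhere. The function name is hypothetical.

```bash
# Per-GPU memory budget under an even tensor-parallel split.
#   $1 = params (billions)  $2 = bits per weight  $3 = GPU count  $4 = VRAM per GPU (GB)
# Whatever is left per card must hold the KV cache and the CUDA context.
tp_budget() {
  awk -v p="$1" -v bpw="$2" -v n="$3" -v vram="$4" 'BEGIN {
    share = p * bpw / 8 / n
    printf "per-GPU weights: %.1f GB, left per GPU: %.1f GB\n", share, vram - share
  }'
}
```

`tp_budget 70 4.8 2 24` prints `per-GPU weights: 21.0 GB, left per GPU: 3.0 GB`. That leftover is why context length, not just model size, decides what a dual-24GB rig can serve.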

4. The Mac Studio Advantage (M3/M4 Ultra 192GB RAM)

- Cost: $5,000+

- Capabilities: 192GB of Unified Memory.

- Verdict: While slower than NVIDIA GPUs, the massive unified memory allows you to run enormous models (like Qwen3 235B) that would otherwise require $20k+ in enterprise GPUs.

5. The Workstation King (RTX 6000 Ada 48GB)

- Cost: ~$7,000

- Capabilities: Professional-grade drivers, 48GB VRAM, low power draw.

- Verdict: Ideal for corporate environments where stability and warranty are more important than raw gaming performance.

6. The 4-GPU Deep Learning Rig (4x RTX 3090 24GB)

- Cost: ~$4,000 (Used)

- Capabilities: 96GB VRAM. This is the entry point for running GPT-OSS 120B at usable speeds (15-20 t/s).

- Verdict: Requires a specialized motherboard (like a Threadripper setup) and a 1600W+ PSU.

7. The Enterprise Inference Node (NVIDIA L40S 48GB)

- Cost: ~$10,000

- Capabilities: Designed for data centers. High reliability and massive throughput for vLLM clusters.

- Verdict: The standard for mid-sized companies hosting their own internal AI agents.

8. The Sovereign Cloud (NVIDIA H100 80GB)

- Cost: $30,000+

- Capabilities: The industry standard for training and high-speed inference.

- Verdict: Overkill for most, but necessary for those training their own LoRAs or serving thousands of concurrent requests.

9. The Future-Proof Build (RTX 5090 32GB)

- Cost: ~$2,000

- Capabilities: 32GB VRAM and next-gen memory bandwidth.

- Verdict: In 2026, this card is the new baseline for "High-End" local AI. It runs 30B-class models at very high speeds, while 70B models fit on a single card only with aggressive ~3-bit quantization.

10. The Rackmount Titan (8x H100/B200)

- Cost: $250,000+

- Capabilities: Total AI dominance. Can host multiple instances of 400B+ parameter models.

- Verdict: For tech giants and research institutions only.

The 2026 Model Leaderboard: What to Run Locally

Hardware is useless without the right weights. Based on 2026 benchmarks, these are the top models for your local LLM server.

1. GPT-OSS 120B (The Reasoning King)

Released as an open-weight gift to the community, this model is the closest experience to ChatGPT-5. It excels at complex planning, logic, and nuanced conversation. Requires at least 80GB of VRAM for a decent experience.

2. Llama 4 70B (The All-Rounder)

Meta’s flagship remains the most compatible and well-supported model. It is the "default" choice for general-purpose assistants. It runs beautifully on a dual-3090 setup.

3. Qwen3-Coder-30B (The Developer's Choice)

For coding, Qwen3-Coder is unrivaled. It consistently beats much larger models in Python and Rust benchmarks. Its 30B size means it fits on a single RTX 4090 with room for a large context window.

4. Gemma 3 27B (The Multimodal Specialist)

Google's open release is highly optimized for vision and creative writing. It has a "human-like" feel that many find superior to the more clinical Llama models.

5. DeepSeek V3.2-Exp (The Logic Engine)

Known for its "Thinking Mode," DeepSeek is the go-to for math and debugging. It uses a Mixture-of-Experts (MoE) architecture that activates only a small fraction of its parameters for each token. This makes it fast for its size, although the full model must still fit in memory.
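MoE models split the usual size/speed trade-off: memory scales with total parameters, but decode speed scales with the active parameters read per token. Here is a sketch using the DeepSeek-V3-family shape (671B total, about 37B active per token). It assumes ~4.8 bits per weight and a hypothetical 800 GB/s of memory bandwidth. The function name is hypothetical.

```bash
# MoE estimate: resident memory from total params, speed ceiling from active params.
#   $1 = total params (B)  $2 = active params per token (B)
#   $3 = bits per weight   $4 = memory bandwidth (GB/s)
moe_estimate() {
  awk -v total="$1" -v active="$2" -v bpw="$3" -v bw="$4" 'BEGIN {
    mem = total * bpw / 8          # GB that must be resident in memory
    per_token = active * bpw / 8   # GB streamed per generated token
    printf "memory: %.1f GB, speed ceiling: %.1f t/s\n", mem, bw / per_token
  }'
}
```

`moe_estimate 671 37 4.8 800` prints `memory: 402.6 GB, speed ceiling: 36.0 t/s`. A hypothetical dense model of the same size, `moe_estimate 671 671 4.8 800`, needs the same memory but tops out at `2.0` t/s.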

Step-by-Step: Deploying Your Local Inference Server

Ready to build? Follow this high-level guide to setting up a production-ready local LLM server using Linux and Docker.

Step 1: Hardware Assembly and OS

Install Ubuntu 24.04 LTS, which is well supported by NVIDIA's drivers and CUDA tooling, then install the NVIDIA proprietary driver before continuing. Ensure your BIOS has IOMMU and Above 4G Decoding enabled if using multiple GPUs.

Step 2: Install NVIDIA Container Toolkit

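This allows Docker to access your GPUs. The package is distributed through NVIDIA's own apt repository, so add that repository first by following NVIDIA's Container Toolkit installation guide.

```bash
sudo apt-get update
sudo apt-get install -y nvidia-container-toolkit
# Register the NVIDIA runtime with Docker, then restart the daemon
sudo nvidia-ctk runtime configure --runtime=docker
sudo systemctl restart docker
# Sanity check: the GPUs should be listed from inside a container
sudo docker run --rm --gpus all ubuntu nvidia-smi
```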

Step 3: Deploy vLLM via Docker

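Use vLLM to serve your model as an OpenAI-compatible API. Replace the model ID with the exact Hugging Face repository you want to serve. Gated repositories such as Meta's also require a Hugging Face access token, passed in here through the HF_TOKEN shell variable. The --ipc=host flag, taken from vLLM's Docker instructions, gives the container enough shared memory for multi-GPU inference.

```bash
docker run -d --gpus all \
  -v ~/.cache/huggingface:/root/.cache/huggingface \
  -e HUGGING_FACE_HUB_TOKEN="$HF_TOKEN" \
  -p 8000:8000 \
  --ipc=host \
  vllm/vllm-openai \
  --model meta-llama/Llama-4-70B-Instruct \
  --tensor-parallel-size 2
```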

(Note: --tensor-parallel-size 2 splits the model across two GPUs.)
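Once the container is up, any OpenAI-style client can talk to it. Here is a minimal curl-based sketch of a single-turn `/v1/chat/completions` call. It assumes the server is reachable at `VLLM_URL` and that the first argument matches the served model ID. The helper names are hypothetical, and the JSON escaping handles only quotes and backslashes.

```bash
# Address of the vLLM OpenAI-compatible server started above.
VLLM_URL="${VLLM_URL:-http://localhost:8000}"

# Escape backslashes and double quotes for embedding in JSON (no newline handling).
json_escape() {
  printf '%s' "$1" | sed -e 's/\\/\\\\/g' -e 's/"/\\"/g'
}

# Build a single-turn request body: $1 = model ID, $2 = prompt, $3 = max tokens (default 256).
chat_payload() {
  printf '{"model":"%s","messages":[{"role":"user","content":"%s"}],"max_tokens":%d}' \
    "$(json_escape "$1")" "$(json_escape "$2")" "${3:-256}"
}

# POST the request; the reply text is in choices[0].message.content.
chat() {
  curl -s "$VLLM_URL/v1/chat/completions" \
    -H "Content-Type: application/json" \
    -d "$(chat_payload "$@")"
}
```

If you are unsure of the exact model ID, `curl http://localhost:8000/v1/models` lists what the server is serving.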

Step 4: Add the UI (Open WebUI)

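Connect your front-end to the vLLM backend. Replace your-ip with the server's address, because localhost inside the container refers to the container itself. Keep the named volume so that chats and settings survive container upgrades.

```bash
docker run -d -p 3000:8080 \
  -e OPENAI_API_BASE_URL=http://your-ip:8000/v1 \
  -e OPENAI_API_KEY=unused \
  -v open-webui:/app/backend/data \
  --name open-webui \
  ghcr.io/open-webui/open-webui:main
```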

Security and Hardening: Protecting Your Private AI Infrastructure

Hosting a local LLM server opens up new attack vectors. If your server is accessible via the web, you must harden it.

- VPN Access Only: Never expose your LLM API (port 8000) or WebUI (port 3000) directly to the internet. Use Tailscale or WireGuard for secure remote access.

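- Container Isolation: Run your models in Docker containers with limited privileges. This limits the damage if the serving stack or an agent tool is compromised (for example, through a prompt injection that triggers tool use) and keeps it away from your host file system.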

- Lakera Guard: Consider using a security layer like Lakera Guard to filter for prompt injections and PII (Personally Identifiable Information) leaks before they reach your model.

- Regular Updates: Open-source AI tools move fast. A vulnerability in llama.cpp or vLLM could be patched within hours; make sure you pull the latest images at least weekly.
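The VPN-only rule can be enforced in a deploy script. Here is a minimal sketch. It assumes you publish container ports with an explicit host address, and it accepts only loopback, the RFC 1918 private ranges, and Tailscale's 100.64.0.0/10 range. The function name is hypothetical, and IPv6 and hostnames are simply rejected.

```bash
# Succeeds only if $1 is an IPv4 address that is safe to bind a published port to.
is_private_bind() {
  local a b rest
  local re='^[0-9]{1,3}(\.[0-9]{1,3}){3}$'
  # Require a dotted quad; 0.0.0.0 ("all interfaces") passes this but is rejected below.
  [[ $1 =~ $re ]] || return 1
  IFS=. read -r a b rest <<< "$1"
  a=$((10#$a)); b=$((10#$b))                        # force base 10 so "010" is not octal
  (( a == 127 )) && return 0                        # loopback
  (( a == 10 )) && return 0                         # 10.0.0.0/8
  (( a == 172 && b >= 16 && b <= 31 )) && return 0  # 172.16.0.0/12
  (( a == 192 && b == 168 )) && return 0            # 192.168.0.0/16
  (( a == 100 && b >= 64 && b <= 127 )) && return 0 # 100.64.0.0/10 (Tailscale)
  return 1
}
```

Docker's `-p 127.0.0.1:8000:8000` form binds a published port to a single host address. A deploy script could therefore check `is_private_bind "$BIND_ADDR"` before running `docker run -p "$BIND_ADDR:8000:8000" ...`, and refuse to start otherwise.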

"The infrastructure part is what people don't talk about enough. Running a model locally for yourself is one thing, but once you start dealing with multiple requests... it turns into a small DevOps setup." — r/LLMDevs Discussion

Key Takeaways

- VRAM is King: Your hardware choice should be dictated by the VRAM requirements of the models you want to run. 24GB is the minimum for serious use.

- Ollama for Ease, vLLM for Speed: Use Ollama for your personal desktop and vLLM for serving applications or multiple users.

- Quantization is Essential: Don't fear 4-bit quants. They are the only way to run powerful models on consumer hardware without significant intelligence loss.

- Privacy is the Product: The primary value of a local LLM server is the total control over your data and the elimination of recurring subscription fees.

- Hybrid is the Future: Most teams will land on a hybrid setup—local models for sensitive/high-volume tasks and cloud APIs for the absolute bleeding-edge reasoning when needed.

Frequently Asked Questions

Can I run a local LLM server on a standard laptop?

Yes, but with limitations. A modern laptop with an Apple M3/M4 chip or an NVIDIA RTX 40-series GPU can run smaller models (7B-14B) quite well. For larger models, you will experience slow "tokens per second" that may feel sluggish compared to ChatGPT.

What is the best GPU for a local LLM server in 2026?

For most users, the RTX 5090 (32GB) or a used RTX 3090 (24GB) offers the best price-to-performance ratio. For professional use, the RTX 6000 Ada or L40S is preferred for reliability and VRAM capacity.

Do I need an internet connection to use my local LLM?

No. Once the model weights are downloaded, a local LLM server can function entirely offline. This makes it ideal for secure environments or remote locations.

How does a local LLM compare to ChatGPT-4o or GPT-5?

In 2026, the best open-weight models (like GPT-OSS 120B or Llama 4 400B) are at parity with or exceed the reasoning capabilities of GPT-4o. However, cloud models still hold a slight edge in "world knowledge" and integrated tool access (like live web browsing).

Is it legal to use these models for business?

Many modern open models use the Apache 2.0 license, which permits commercial use without user caps. Meta's Llama community licenses also allow commercial use but require a separate license from Meta once your products exceed 700 million monthly active users. Always check the specific LICENSE file on Hugging Face before deploying.

Conclusion

Building a local LLM server in 2026 is an investment in your digital autonomy. Whether you are a developer looking to automate your coding workflow with Qwen3-Coder or an enterprise securing its intellectual property with private AI infrastructure, the tools and hardware have finally reached a point of seamless integration.

Stop paying for tokens you don't own and start building a stack that you do. The transition from "AI as a service" to "AI as infrastructure" is here. By following this guide and selecting the right hardware/software combination, you can ensure your AI remains fast, free of per-token fees, and, most importantly, private.
