In 2026, the 'Generalist AI' is dead. As the LocalLLaMA community recently noted, we have officially crossed the Rubicon: local-first AI is no longer a niche hobby but the default for developers. With models like Qwen 3 Coder 80B and specialized 4B variants running natively on smartphones, the industry has shifted from 'bigger is better' to 'smarter is merged.' AI model merging frameworks have become the primary vehicle for this revolution, allowing engineers to blend specialized weights into high-performance Mixture of Experts (MoE) models without the multimillion-dollar price tag of traditional fine-tuning. If you aren't merging, you're overpaying for compute.

The Rise of Local AI and the Merging Revolution

By early 2026, the economic landscape of AI changed. Big labs began pushing tighter APIs and heavier pricing structures, leading privacy-sensitive industries—law, healthcare, and finance—to pivot toward local RAG setups and custom-built models. As one Reddit user pointed out, "The hardware cost argument keeps coming up, but there's a middle ground... a cheap VPS with decent RAM lets you run quantized 30B models 24/7."

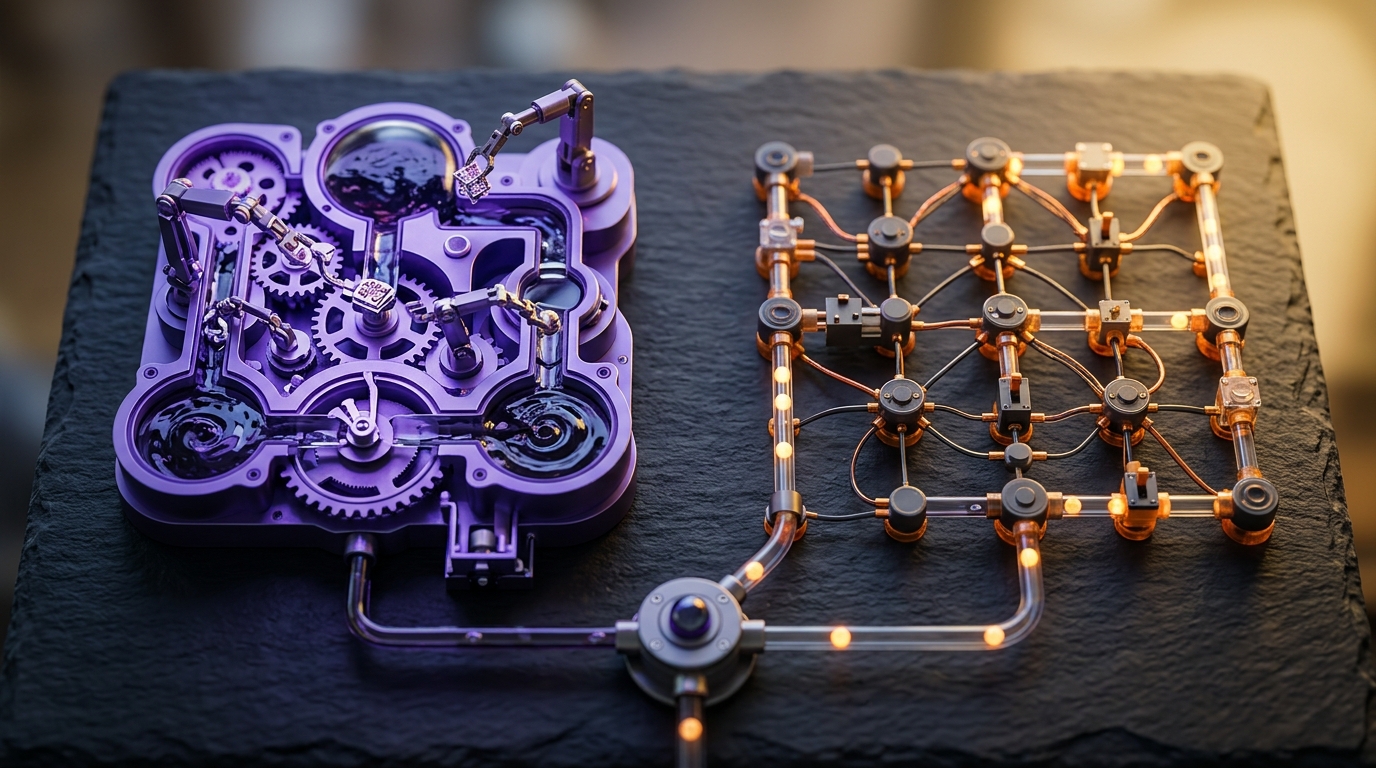

This shift necessitated a way to create models that don't try to be 'all things to all people.' Why does a coding assistant need to know the airspeed velocity of an unladen swallow or quotes from Tony Hancock? AI model merging frameworks allow us to strip away the 'dilution' of general knowledge and build specialized experts. By merging a 4B coding model with an 8B reasoning model, developers are creating 'Frankenmerges' that outperform 70B generalist models on specific tasks.

What is Model Merging? Task Arithmetic Explained

Model merging is the process of combining two or more Large Language Models (LLMs) by performing arithmetic operations on their weights. Unlike traditional ensembling, which runs models in parallel, merging creates a single unified checkpoint.

At the heart of this is Task Arithmetic. A 'task vector' is calculated by subtracting the base model weights from a fine-tuned model's weights:

task_vector = fine_tuned_weights - pretrained_weights

This vector represents the 'knowledge delta.' By adding multiple task vectors back to a base model, you can create a multi-tasking powerhouse. In 2026, this technique is 5-100x faster than multi-task fine-tuning and requires significantly less VRAM.
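The arithmetic above can be sketched in a few lines. This is a minimal illustration, assuming toy weights stored as plain Python lists rather than real tensors; the function names `task_vector` and `apply_task_vectors` and the `scale` parameter are hypothetical and do not come from any particular library.

```python
# Toy "state dicts": parameter name -> flat list of floats (stand-ins for tensors).

def task_vector(fine_tuned, pretrained):
    """Per-parameter difference: fine_tuned_weights - pretrained_weights."""
    return {
        name: [f - p for f, p in zip(fine_tuned[name], pretrained[name])]
        for name in pretrained
    }

def apply_task_vectors(pretrained, vectors, scale=1.0):
    """Add the scaled sum of several task vectors back onto the base weights."""
    merged = {}
    for name, base in pretrained.items():
        merged[name] = [
            b + scale * sum(v[name][i] for v in vectors)
            for i, b in enumerate(base)
        ]
    return merged

base = {"w": [1.0, 2.0, 3.0]}
coder = {"w": [1.5, 2.0, 3.0]}       # fine-tune that changed the first weight
math_model = {"w": [1.0, 2.0, 2.0]}  # fine-tune that changed the last weight

merged = apply_task_vectors(
    base, [task_vector(coder, base), task_vector(math_model, base)]
)
print(merged)  # {'w': [1.5, 2.0, 2.0]}
```

Because the two toy fine-tunes touched different weights, the merged model carries both deltas. Real task vectors overlap, which is where the interference-handling techniques later in this article come in.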

1. MergeKit: The Industry Standard

Maintained by Arcee AI, MergeKit remains the most powerful and versatile tool in the merging ecosystem. It has facilitated the creation of legendary models like MythoMax and Goliath.

MergeKit's primary strength is its out-of-core approach, which allows users to perform merges on hardware with as little as 8GB of VRAM or even entirely on a CPU. In 2026, its mergekit-tokensurgeon feature has become essential for transplanting tokenizers between models, enabling speculative decoding and cross-tokenizer knowledge distillation.

- Best for: High-fidelity weight blending and production-grade checkpoints.

- Key Feature: Support for SLERP, TIES, DARE, and Passthrough methods.

2. Mergenetic: Evolutionary Optimization

As manual experimentation became too time-consuming, evolutionary model merging software like Mergenetic stepped in. Mergenetic uses evolutionary algorithms to automatically discover the optimal 'merging recipe.'

Instead of guessing weights (e.g., 50% Model A, 50% Model B), Mergenetic initializes a population of different merging configurations, tests them against benchmarks like GSM8K or HumanEval, and 'evolves' the best performers. This mirrors the research published in Nature Machine Intelligence in early 2025, which showed that evolutionary merges could create hybrid models with mathematical capabilities exceeding those of models twice their size.
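The loop described above (initialize a population, score it, keep and mutate the best) can be sketched with a toy example. This is not Mergenetic's API: the function names are hypothetical, the "models" are three-element lists, and `toy_fitness` is an assumed stand-in for a real benchmark such as GSM8K.

```python
import random

def merge(weights_a, weights_b, alpha):
    """Linear blend: alpha of model A, (1 - alpha) of model B."""
    return [alpha * a + (1 - alpha) * b for a, b in zip(weights_a, weights_b)]

def evolve_alpha(fitness, pop_size=12, generations=50, sigma=0.05, seed=0):
    """Search for the blend ratio with the highest fitness."""
    rng = random.Random(seed)
    population = [rng.random() for _ in range(pop_size)]
    for _ in range(generations):
        ranked = sorted(population, key=fitness, reverse=True)
        parents = ranked[: pop_size // 3]  # keep the top third unchanged
        children = [
            # Mutate a random parent and clamp the result to [0, 1].
            min(1.0, max(0.0, rng.choice(parents) + rng.gauss(0.0, sigma)))
            for _ in range(pop_size - len(parents))
        ]
        population = parents + children
    return max(population, key=fitness)

model_a = [0.2, 0.8, -0.5]
model_b = [0.6, 0.1, 0.3]
target = merge(model_a, model_b, 0.7)  # pretend the ideal recipe is 70/30

def toy_fitness(alpha):
    """Stand-in for a benchmark score: higher is better."""
    merged = merge(model_a, model_b, alpha)
    return -sum((m - t) ** 2 for m, t in zip(merged, target))

best_alpha = evolve_alpha(toy_fitness)
print(round(best_alpha, 2))
```

In a real run, each fitness call means building and benchmarking a candidate model, which is why evaluation cost dominates evolutionary merging.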

3. MERGE³: The GPU-Efficient Contender

One of the most prominent MergeKit alternatives in 2026 is MERGE³. Its claim to fame is a 50x reduction in fitness computation costs. While traditional evolutionary merging requires massive compute to evaluate each 'child' model, MERGE³ uses lightweight fitness estimators. This makes evolutionary merging feasible on a single consumer GPU, like the RTX 4090, or even on a Mac M4 Pro with 48GB of unified memory.

4. RabbitLLM: Breaking the VRAM Barrier

Emerging from the LocalLLaMA community, RabbitLLM addresses the silent gatekeeper: hardware prices. RabbitLLM allows for the creation of models limited by layer size rather than total model size. This is a game-changer for 'layer stacking' or passthrough merges. By intelligently offloading inactive layers during the merging process, RabbitLLM enables the creation of 30B+ models on laptops that would otherwise choke on the parameter count.

5. Gumloop: No-Code Agentic Merging

For those who want to get started with LLM merging without the Python headache, Gumloop offers a visual drag-and-drop interface. While primarily an agent framework, Gumloop's 'Agentic Orchestration' allows users to treat different merged models as nodes in a workflow. You can effectively 'merge' the outputs of a specialized 4B model and an 8B model in real time, creating a modular MoE system that functions as a single unit.

6. StackAI: Enterprise-Grade MoE Orchestration

StackAI is the go-to for regulated industries like finance and healthcare. It excels at building internal custom mixture of experts setups. StackAI provides a secure environment for merging domain-specific models (e.g., a legal-tuned Llama 3) with general reasoning bases. Its focus on SOC2 and HIPAA compliance makes it a strong choice for enterprises that need the power of merged models without exposing data to public APIs.

7. CrewAI: Multi-Agent Weight Blending

CrewAI has evolved from a simple agent framework into a sophisticated multi-agent orchestration system. In the context of merging, CrewAI allows a 'crew' of specialized models to share context and task delegation. By using CrewAI, developers can simulate a Mixture of Experts model where each 'expert' is a separate, merged model optimized for a specific role (Researcher, Coder, Reviewer).

8. LangGraph: Stateful Merging Logic

Part of the LangChain ecosystem, LangGraph is the best tool for building complex, stateful logic into your merged systems. If you are building a custom mixture of experts system, LangGraph is what you use to define the 'Router.' It decides which merged expert should handle a specific prompt based on the state of the conversation, effectively acting as the 'brain' of a distributed MoE.
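To make the 'Router' idea concrete, here is a framework-free sketch in plain Python. It deliberately does not use the LangGraph API; the names `make_step`, `experts`, and `keywords` are hypothetical, and the lambda "experts" are stand-ins for calls to separate merged models. The point is only that the routing decision depends on conversation state as well as the current prompt.

```python
from typing import Callable, Dict, List

Expert = Callable[[str], str]

def make_step(experts: Dict[str, Expert], keywords: Dict[str, List[str]], default: str):
    """Return a step function that routes one prompt and updates the state."""

    def route(state: dict) -> str:
        text = state["prompt"].lower()
        for name, words in keywords.items():
            if any(word in text for word in words):
                return name
        # No keyword hit: stay with the expert used on the previous turn.
        return state.get("last_expert", default)

    def step(state: dict) -> dict:
        name = route(state)
        return {**state, "last_expert": name, "reply": experts[name](state["prompt"])}

    return step

# Stand-in experts; in practice each would call a separate merged model.
experts = {
    "coder": lambda p: f"[coder] {p}",
    "legal": lambda p: f"[legal] {p}",
    "general": lambda p: f"[general] {p}",
}
keywords = {"coder": ["python", "bug", "function"], "legal": ["contract", "clause"]}

step = make_step(experts, keywords, default="general")
state = step({"prompt": "Fix this Python bug"})
state = step({**state, "prompt": "Can you explain why it failed?"})
print(state["last_expert"])  # coder
```

The follow-up question contains no routing keyword, so the router falls back to the expert recorded in the state. A production router would more likely use a classifier or a small model than keyword matching.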

9. SMM-Bench Tools: Performance Validation

Merging is useless without benchmarking. SMM-Bench (September 2025) introduced formal continuous search spaces for hyperparameter tuning. Tools built on this benchmark allow you to optimize the Data Flow Space (DFS). Instead of just merging weights, these tools optimize the inference path tokens follow through the network, ensuring that your merged model doesn't suffer from 'catastrophic forgetting' of its base knowledge.

10. Zylos AutoMerger: Research-Grade Automation

Zylos Research’s AutoMerger is a specialized framework that focuses on Task Vector Negation. This is used to 'unlearn' unwanted behaviors (like hallucinations or toxic patterns) by subtracting a 'bad behavior' task vector from a model. It’s a surgical approach to merging that is gaining massive traction in the safety-critical AI sector of 2026.
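Task vector negation itself is simple arithmetic. The sketch below is a generic illustration rather than AutoMerger's interface: `negate_behavior` and its `scale` parameter are hypothetical names, and the weights are toy lists. It assumes you have a checkpoint fine-tuned to exhibit the unwanted behavior.

```python
def negate_behavior(base, tuned_on_bad, scale=1.0):
    """Subtract the 'bad behavior' task vector (tuned_on_bad - base) from base."""
    return [b - scale * (t - b) for b, t in zip(base, tuned_on_bad)]

base = [1.0, 2.0]
tuned_on_bad = [1.5, 2.0]  # fine-tuning on bad data moved the first weight by +0.5

print(negate_behavior(base, tuned_on_bad))             # [0.5, 2.0]
print(negate_behavior(base, tuned_on_bad, scale=0.5))  # [0.75, 2.0]
```

The `scale` factor controls how far the model is pushed away from the unwanted direction. Too large a value can damage unrelated capabilities, so it is usually tuned against a benchmark.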

Core Merging Techniques: SLERP, TIES, and DARE

To build a truly elite model, you must understand the three 'Kings' of merging techniques in 2026:

SLERP (Spherical Linear Interpolation)

Traditional linear interpolation often results in a 'dilution' of features, because averaging two weight vectors shrinks their magnitude. SLERP interpolates along the arc between the two vectors on the surface of a hypersphere, preserving the high-dimensional geometry of the weights.

- Use case: Merging two high-quality models of the same base (e.g., two different Llama 3.1 8B tunes).
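A minimal sketch of SLERP on two flat weight vectors follows, using only the standard library. The function name and the `eps` threshold are illustrative choices. Real implementations apply this per tensor and handle many more edge cases.

```python
import math

def slerp(t, v0, v1, eps=1e-8):
    """Spherical linear interpolation between v0 (t=0) and v1 (t=1)."""
    norm0 = math.sqrt(sum(x * x for x in v0))
    norm1 = math.sqrt(sum(x * x for x in v1))
    if norm0 == 0.0 or norm1 == 0.0:
        # The angle is undefined for a zero vector: fall back to linear interpolation.
        return [(1 - t) * a + t * b for a, b in zip(v0, v1)]
    cos_omega = sum(a * b for a, b in zip(v0, v1)) / (norm0 * norm1)
    cos_omega = max(-1.0, min(1.0, cos_omega))  # guard against rounding error
    omega = math.acos(cos_omega)                # angle between the two vectors
    if abs(math.sin(omega)) < eps:
        # Nearly parallel vectors: linear interpolation is numerically safer.
        return [(1 - t) * a + t * b for a, b in zip(v0, v1)]
    s0 = math.sin((1 - t) * omega) / math.sin(omega)
    s1 = math.sin(t * omega) / math.sin(omega)
    return [s0 * a + s1 * b for a, b in zip(v0, v1)]

midpoint = slerp(0.5, [1.0, 0.0], [0.0, 1.0])
print([round(x, 4) for x in midpoint])  # [0.7071, 0.7071]
```

For these two unit vectors, plain averaging would give [0.5, 0.5], whose length is about 0.71. The SLERP midpoint keeps unit length, which is the 'dilution' that the technique avoids.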

TIES-Merging (Trim, Elect, Sign)

When merging multiple models, parameter interference is a nightmare. TIES-Merging resolves this in three steps:

1. Trim: Keep only the top-k% most significant changes.
2. Elect Sign: Resolve conflicts where one model says 'increase weight' and another says 'decrease.'
3. Merge: Combine the elected parameters.
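The three steps can be sketched on toy task vectors. This is a simplified illustration under stated assumptions: vectors are flat lists, the sign is elected by the sign of the summed trimmed values, and agreeing entries are averaged. The names `trim`, `ties_merge`, and `keep_fraction` are hypothetical.

```python
def trim(vector, keep_fraction):
    """Step 1: zero out everything except the largest-magnitude entries."""
    k = max(1, round(len(vector) * keep_fraction))
    threshold = sorted((abs(x) for x in vector), reverse=True)[k - 1]
    return [x if abs(x) >= threshold else 0.0 for x in vector]

def ties_merge(task_vectors, keep_fraction=0.5):
    trimmed = [trim(v, keep_fraction) for v in task_vectors]
    merged = []
    for values in zip(*trimmed):
        # Step 2: elect the sign with the larger total magnitude at this position.
        positive = sum(values) >= 0
        # Step 3: average only the nonzero entries that agree with the elected sign.
        agreeing = [x for x in values if x != 0.0 and (x > 0) == positive]
        merged.append(sum(agreeing) / len(agreeing) if agreeing else 0.0)
    return merged

tv_a = [0.9, -0.1, 0.4, 0.05]
tv_b = [-0.6, 0.8, 0.5, -0.02]
print(ties_merge([tv_a, tv_b]))  # [0.9, 0.8, 0.4, 0.0]
```

At the first position the two models disagree (0.9 versus -0.6). The positive direction wins the election, so the conflicting -0.6 is discarded instead of dragging the average down. The merged vector is then added back to the base model, typically with a scaling factor.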

DARE (Drop And Rescale)

DARE is the most 'magical' technique. It randomly drops 90-99% of the delta parameters (task vector components) and rescales the remaining ones by 1/(1 - p), where p is the drop rate, so that the expected value of each delta is preserved. Research shows that models often have massive redundancy; DARE strips away the noise, allowing for cleaner merges with less interference.
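A minimal sketch of the drop-and-rescale step, assuming a flat list as the task vector; the function name, the `seed` parameter, and the toy input are illustrative only.

```python
import random

def dare(task_vector, drop_rate=0.9, seed=0):
    """Randomly zero a fraction of the deltas and rescale the survivors."""
    if not 0.0 <= drop_rate < 1.0:
        raise ValueError("drop_rate must be in [0, 1)")
    rng = random.Random(seed)
    keep_scale = 1.0 / (1.0 - drop_rate)  # keeps the expected value unchanged
    return [
        0.0 if rng.random() < drop_rate else x * keep_scale
        for x in task_vector
    ]

deltas = [1.0] * 10_000
sparse = dare(deltas, drop_rate=0.9)
kept = [x for x in sparse if x != 0.0]
print(len(kept), sum(sparse) / len(sparse))
```

Roughly one in ten deltas survives, each scaled up by about 10x, so the average delta stays close to the original value of 1.0. The sparsified task vectors are then combined, often with TIES-style sign election, before being added back to the base model.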

| Technique | Best For | Complexity |
|---|---|---|
| SLERP | Pairwise Merging | Low |
| TIES | 3+ Model Merging | Medium |
| DARE | Reducing Interference | Medium |
| Passthrough | Exotic Architectures | High |

Hardware Requirements: Managing the RAM Bottleneck

As the Reddit thread "Is 2026 the Year Local AI Becomes the Default?" highlighted, RAM is the silent gatekeeper. While you can merge models on a CPU, running them requires VRAM or fast Unified Memory.

- The Budget Path: A cheap VPS with 64GB of RAM can run a merged 30B model for ~$22/month.

- The Power User Path: An Apple M4 Pro with 48GB-64GB of unified memory is the current 'gold standard' for local inference and merging.

- The Developer Path: Dual RTX 3090/4090 setups (48GB VRAM total) suit training-heavy merging tasks or running 4-bit quantized 70B merges; an unquantized 70B model needs roughly 140GB for its FP16 weights alone.

"The real win isn’t shrinking knowledge—it’s building modular systems where small models + tools handle precision without hallucinating." - LocalLLaMA expert perspective.

Key Takeaways

- Model merging is one of the most cost-effective ways to build specialized LLMs in 2026, anywhere from 5x to 100x faster than multi-task fine-tuning.

- MergeKit is the essential toolkit, but Mergenetic and MERGE³ are leading the way in automated evolutionary optimization.

- DARE and TIES are critical techniques for merging 3+ models without performance degradation.

- Local AI has become the default for privacy-sensitive industries, driven by the ability to run merged 8B-30B models on consumer hardware.

- Task Arithmetic allows developers to treat model capabilities like Lego bricks—adding coding skills and subtracting hallucinations.

Frequently Asked Questions

What is the best AI model merging framework for beginners?

MergeKit is the best starting point due to its massive community support and extensive documentation. If you prefer a visual interface, Gumloop offers a no-code way to orchestrate multiple models into a single workflow.

Can I merge models with different architectures (e.g., Mistral and Llama)?

In 2026, this is still largely experimental. Most successful merges are homogeneous, meaning they use the same base model. However, 'Passthrough' or 'Frankenmerging' allows you to stack layers from different models, though it often requires extensive trial and error to maintain coherence.

How much VRAM do I need for model merging?

One of the benefits of modern frameworks like MergeKit is their 'out-of-core' processing. You can merge 70B models on as little as 8GB of VRAM by offloading the process to your system RAM or CPU. However, running the resulting model will require significantly more (e.g., 40GB+ for a 70B model at 4-bit quantization).
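The 40GB+ figure follows from simple arithmetic, sketched below. The weight calculation is exact; the `overhead` factor of 1.2 for KV cache and runtime buffers is an assumed rule of thumb and varies with context length and inference engine.

```python
def weight_memory_gb(params_billions, bits_per_weight):
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * 1e9 * bits_per_weight / 8 / 1e9

def inference_memory_gb(params_billions, bits_per_weight, overhead=1.2):
    """Rough total: weights plus an assumed 20% for KV cache and buffers."""
    return weight_memory_gb(params_billions, bits_per_weight) * overhead

print(weight_memory_gb(70, 4))   # 35.0  (70B at 4-bit, weights only)
print(weight_memory_gb(70, 16))  # 140.0 (70B at FP16, weights only)
print(round(inference_memory_gb(70, 4)))  # 42
```

The same function shows why a 4-bit 30B merge (about 15GB of weights) fits comfortably on a machine with 48GB of unified memory.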

What is a 'Frankenmerge'?

A Frankenmerge (or Passthrough merge) is a model created by stacking layers from different models or repeating layers from the same model to create a higher parameter count (e.g., creating a 10B model from a 7B base). While powerful, they can be 'flaky' if the transition between layers isn't optimized.
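Layer stacking can be sketched as plain list manipulation. The recipe below is hypothetical: `model_a` and `model_b` are placeholder names for two 32-layer models of the same architecture, and each slice is `(model, start, end)` with `end` exclusive.

```python
def passthrough(slices):
    """Build the stacked layer plan from (model, start, end) slices."""
    stacked = []
    for model, start, end in slices:
        stacked.extend((model, layer) for layer in range(start, end))
    return stacked

# Overlapping slices: layers 8-23 appear twice, once from each model.
recipe = [("model_a", 0, 24), ("model_b", 8, 32)]
layers = passthrough(recipe)

print(len(layers))  # 48
print(layers[23], layers[24])  # ('model_a', 23) ('model_b', 8)
```

The seam between `('model_a', 23)` and `('model_b', 8)` is where coherence tends to break, because layer 8 of one model never saw the output of layer 23 of another during training. That is the 'flaky' transition mentioned above.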

Is model merging better than fine-tuning?

Merging is not 'better,' but it is more efficient. Fine-tuning is necessary to teach a model entirely new data or a very specific format. Merging is best for combining existing capabilities from different fine-tuned models. Many top-tier models in 2026 use a 'Merge-then-Finetune' approach.

Conclusion

The ability to build custom MoE models through AI model merging frameworks has democratized artificial intelligence. No longer are the 'frontier' capabilities locked behind the gated walls of Big Tech APIs. By leveraging tools like MergeKit, Mergenetic, and techniques like DARE, any developer with a decent laptop can build a world-class, domain-specific assistant.

As we move further into 2026, the focus will continue to shift toward modular, agentic systems where merged models act as specialized experts. Start experimenting with task vectors today—the future of AI isn't one giant model; it's a thousand perfectly merged ones.
