By 2026, the 'honeymoon phase' of public AI APIs is officially over. According to McKinsey’s 2025 State of AI report, 78% of organizations now use AI in at least one business function, but a massive shift is occurring: the migration from public hyperscalers to AI-native private cloud environments. Why? Because in a world where data is the new oil, the US CLOUD Act and jurisdictional overreach have turned public clouds into a liability for sensitive IP. If you are building a sovereign RAG stack or managing private LLM hosting in 2026, you aren't just looking for storage; you are looking for an ironclad fortress that doesn't sacrifice the 'LPU' speeds and GPU densities required for modern inference.

- The Sovereignty Crisis: Why Private AI is No Longer Optional

- Architecting the Sovereign RAG Stack: Components of a 2026 AI Infrastructure

- Top 10 AI-Native Private Cloud Platforms for 2026

- Private LLM Hosting 2026: Quantization and the RTX 4090 Revolution

- On-Premise AI Cluster Management: Proxmox vs. Pextra vs. OpenStack

- The Cost of Autonomy: Benchmarking Managed vs. Self-Hosted AI

- Security & Compliance: Auditing Your Private AI Cloud

- Key Takeaways

- Frequently Asked Questions

The Sovereignty Crisis: Why Private AI is No Longer Optional

In early 2026, the industry hit a breaking point. High-profile cases, such as the Canadian court ordering OVH to provide data despite French law, have proven that physical location is not enough to guarantee data safety. For an enterprise AI cloud platform, sovereignty now means operational autonomy.

True sovereignty requires that the software stack, the administrative personnel, and the legal jurisdiction all align within a single secure boundary. This is particularly critical for sovereign AI infrastructure where the models themselves—trained on proprietary corporate data—represent the company's core value. If a third-party provider can be compelled by a foreign government to 'snapshot' a running VM, your entire competitive advantage could vanish in a single legal subpoena.

Furthermore, the 'messy middle' of hybrid cloud has become the default. Engineering teams are no longer choosing between on-prem and cloud; they are demanding a single cloud operating model that can run on-premises for sensitive RAG (Retrieval-Augmented Generation) workloads and burst to sovereign regional clouds for massive training runs.

Architecting the Sovereign RAG Stack: Components of a 2026 AI Infrastructure

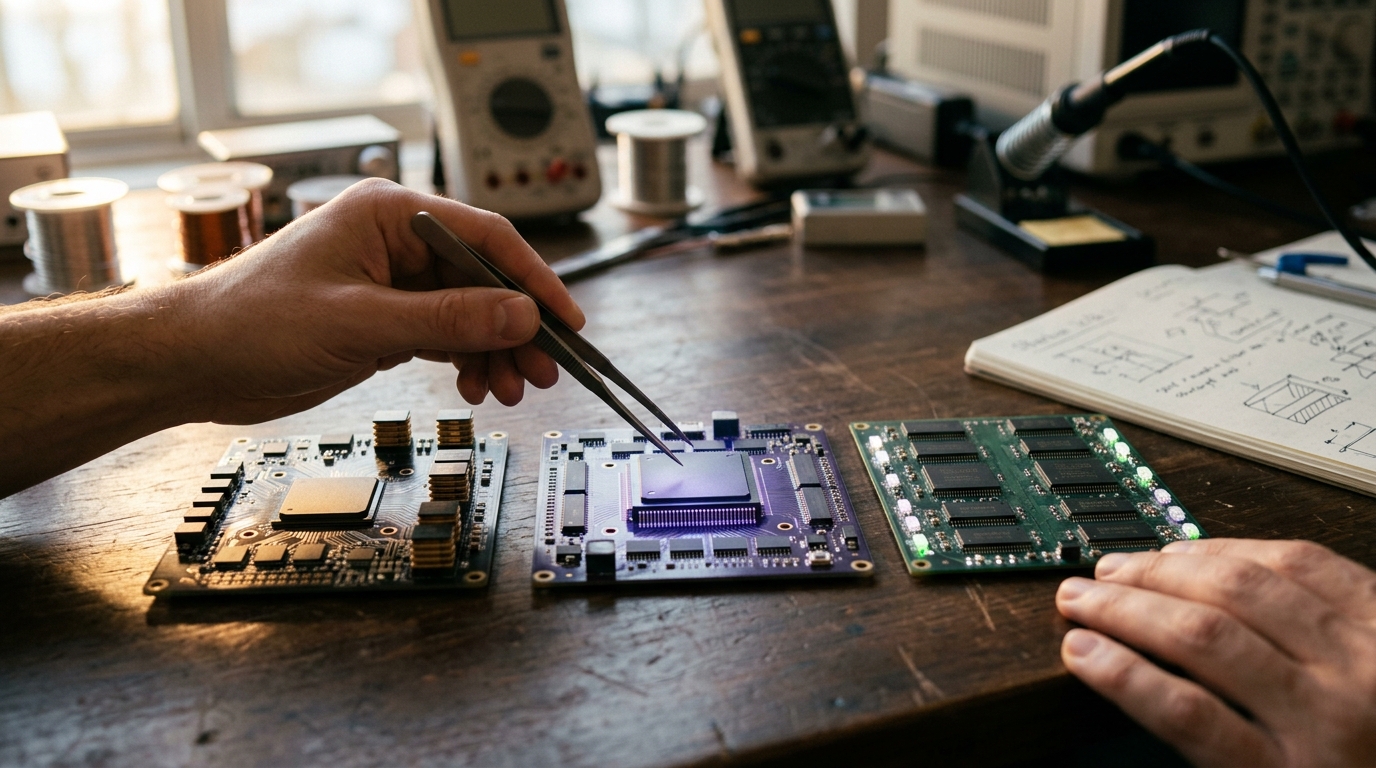

Building a sovereign RAG stack requires more than just a vector database. It requires a tightly integrated layer of compute, storage, and orchestration that prevents data leakage at every hop.

The Core Layers of 2026 Sovereign AI

- The Compute Layer: High-density GPU clusters (NVIDIA H200/B200) or specialized LPUs (Language Processing Units) like those from Groq, which offer sub-second inference for 70B+ parameter models.

- The Orchestration Layer: Kubernetes remains the king, but with AI-native extensions for GPU slicing and multi-tenant isolation.

- The Data Layer: High-IOPS storage (Ceph/ZFS) paired with vector databases that support 'Zero-Knowledge' encryption.

- The Model Layer: Quantized open-source models (Llama 4, Mistral NeMo) running on-premise to ensure no data ever leaves the VPC.
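To make the layers above concrete, here is a minimal sketch of the retrieval step that sits between the data layer and the model layer. Everything in it is an assumption for illustration: the bag-of-words `embed` function stands in for a locally hosted embedding model, `DOCS` stands in for a vector database, and the function names are hypothetical. The point is only that retrieval and prompt assembly can happen entirely in-process, with no external call.

```python
import math
from collections import Counter

def embed(text: str) -> Counter:
    """Toy bag-of-words vector; a real stack would call a local embedding model."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]

def build_prompt(query: str, documents: list[str], k: int = 2) -> str:
    """Assemble the prompt that would be sent to the on-premise model."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

# Hypothetical internal documents standing in for a vector database.
DOCS = [
    "GPU quota requests go through the platform team",
    "Vector indexes are stored on the Ceph cluster",
    "The cafeteria menu changes every Monday",
]
```

Calling `build_prompt("where are vector indexes stored", DOCS, k=1)` produces a prompt containing only the Ceph document, which is the text the model layer would then see.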

"We ended up looking at Groq for inference speed and it's genuinely impressive—the LPU hardware is a completely different experience compared to GPU-based options." — Reddit, r/webdevelopment 2026

Top 10 AI-Native Private Cloud Platforms for 2026

The following platforms have been selected based on their ability to deliver on-premise AI cluster management, hardware-accelerated inference, and jurisdictional sovereignty.

| Rank | Platform | Best For | Sovereign Boundary | AI/GPU Support |
|---|---|---|---|---|
| 1 | Civo | Cloud-Native Sovereignty | UK / EU / India | H100, B200, L40s |
| 2 | Pextra PCE | AI-Native Operations | Global (Self-Hosted) | Native AI Assistant |
| 3 | IBM Cloud | Financial Services | Global Dedicated | H200, Gaudi 3 |
| 4 | HPE GreenLake | On-Prem Consumption | Local (On-Prem) | B300 Blackwell |
| 5 | Nutanix | Hyperconverged AI | Local (On-Prem) | AHV-Native GPU |
| 6 | Red Hat OpenShift | Hybrid Portability | Vendor-Neutral | Multi-Cloud GPU |
| 7 | Oracle OCI | Database-Heavy AI | Distributed Regions | Exadata X11M |
| 8 | StackIT | German Sovereignty | EU (Germany) | Sovereign GPU |
| 9 | OVHcloud | European Value | EU (France/Canada) | NVIDIA H100 |
| 10 | OpenMetal | OpenStack Flexibility | US / Global | Dedicated Cores |

1. Civo: The Sovereign Kubernetes Powerhouse

Civo has emerged as the leader for organizations that need a sovereign RAG stack without the complexity of legacy enterprise software. Their CivoStack Enterprise allows you to run the exact same API-driven cloud stack on your own hardware as you do in their sovereign data centers. With zero egress fees and sub-90-second cluster provisioning, it is one of the fastest ways to stand up private LLM hosting in 2026.

2. Pextra CloudEnvironment (PCE): The AI-Native Disruptor

Pextra PCE is the first platform designed from the ground up with a native AI integration layer. Unlike Proxmox, which requires manual GPU passthrough and complex CLI work, PCE offers a modern React 19 UI with AI-powered automation built into the core. It is the perfect 'VMware-alternative' for teams that want to manage AI workloads without a dedicated sysadmin team.

3. IBM Cloud for Financial Services

IBM remains the gold standard for enterprise AI cloud platforms in regulated sectors. Their Hyper Protect Virtual Servers use processor-level cryptographic isolation, ensuring that even IBM administrators cannot see your data. For banks and healthcare providers, that technical guarantee is the strongest trust signal a provider can offer.

Private LLM Hosting 2026: Quantization and the RTX 4090 Revolution

One of the most significant shifts in 2026 is the democratization of hardware. While H100s are the enterprise standard, quantization has made consumer-grade hardware viable for production.

- Q4 Quantization: Industry standard in 2026. It provides ~90% of model quality at 25% of the VRAM cost.

- RTX 4090 Clusters: Using platforms like RunPod or local 'Living Room' servers, teams are running 70B parameter models on clusters of consumer GPUs for a fraction of the cost of A100s.

As noted in Reddit discussions, self-hosted RTX 4090s in the $0.79–$1.50/hr range are hitting a 'sweet spot' for startups that need high throughput but can't justify a $30k monthly AWS bill. However, for true sovereign AI infrastructure, these consumer setups must be wrapped in a secure private cloud layer like Pextra or Nutanix to prevent external access.
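The "25% of the VRAM" figure follows directly from bit widths, and a back-of-the-envelope calculation shows why a 70B model fits on a small RTX 4090 cluster. The sketch below is an estimate under stated assumptions: it counts weights only at a nominal bits-per-weight, and the `overhead` multiplier of 1.2 for KV cache and activations is a guess, not a measured value. Real 4-bit formats also store scaling factors, so they use slightly more than 4 bits per weight.

```python
import math

def weight_vram_gb(params_billion: float, bits_per_weight: float) -> float:
    """Memory needed for model weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8

def gpus_needed(params_billion: float, bits_per_weight: float,
                gpu_vram_gb: float = 24.0, overhead: float = 1.2) -> int:
    """GPUs required, assuming weights split evenly across cards.

    overhead is an assumed multiplier for KV cache and activations.
    gpu_vram_gb defaults to 24 GB, the RTX 4090's memory size.
    """
    required = weight_vram_gb(params_billion, bits_per_weight) * overhead
    return math.ceil(required / gpu_vram_gb)

fp16_gb = weight_vram_gb(70, 16)   # 140.0 GB at 16 bits per weight
q4_gb = weight_vram_gb(70, 4)      # 35.0 GB at 4 bits per weight
ratio = q4_gb / fp16_gb            # 0.25, the "25% of the VRAM" figure
cards = gpus_needed(70, 4)         # 2 cards under the assumptions above
```

Under these assumptions a Q4 70B model needs roughly two 24 GB cards, while the same model at FP16 would need several times that, which is the gap that makes consumer hardware viable.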

On-Premise AI Cluster Management: Proxmox vs. Pextra vs. OpenStack

Managing an AI cluster requires more than just a hypervisor; it requires a management plane that understands GPU scheduling and NVLink topologies.

Comparison of Management Stacks

- Proxmox VE: The community favorite. It is free and robust but lacks native multi-tenancy and AI-native automation. Great for homelabs, risky for multi-departmental enterprise use.

- Pextra PCE: Adds the 'True Multi-Tenancy' and ABAC (Attribute-Based Access Control) that Proxmox lacks. Its native AI chat assistant helps junior engineers manage complex networking and storage backends (Ceph/ZFS) without deep CLI knowledge.

- OpenStack (OpenMetal): The most powerful but the most complex. It is essentially a 'Build-Your-Own-AWS.' Best for organizations with massive engineering teams who want total control over every packet.
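For readers unfamiliar with ABAC, the idea is that access decisions are computed from attributes of the user and the resource rather than from a fixed role list. The sketch below is a generic illustration only: the attribute names, the `attach_gpu` action, and the clearance thresholds are hypothetical and do not represent Pextra's or any other platform's actual policy model.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AccessRequest:
    user_department: str
    user_clearance: int        # higher means more trusted
    resource_department: str
    resource_sensitivity: int  # higher means more sensitive
    action: str

def is_allowed(req: AccessRequest) -> bool:
    """Evaluate a request against attribute rules; deny anything not listed."""
    same_tenant = req.user_department == req.resource_department
    cleared = req.user_clearance >= req.resource_sensitivity
    if req.action == "read":
        return same_tenant and cleared
    if req.action == "attach_gpu":
        # Hypothetical rule: GPU attachment also needs clearance level 2 or above.
        return same_tenant and cleared and req.user_clearance >= 2
    return False  # deny by default

# A research engineer attaching a GPU to a VM owned by their own department.
ok = is_allowed(AccessRequest("research", 2, "research", 1, "attach_gpu"))
# The same engineer touching a finance VM is rejected on the tenant check.
denied = is_allowed(AccessRequest("research", 2, "finance", 1, "attach_gpu"))
```

The tenant check is what provides isolation between departments sharing one cluster, which is the multi-tenancy gap described above.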

The Cost of Autonomy: Benchmarking Managed vs. Self-Hosted AI

Is private LLM hosting actually cheaper in 2026? It depends on volume, and the cheapest option changes at each tier.

- Low Volume: Managed APIs (OpenAI, Anthropic) are cheapest. No infra to manage.

- Mid Volume: Self-hosting on consumer GPUs (RTX 4090) or sovereign clouds like Civo becomes cheaper once you hit ~5 million tokens per day.

- High Volume: Enterprise private clouds (HPE GreenLake/IBM) provide the lowest TCO (Total Cost of Ownership) through fixed-price consumption models and optimized hardware utilization.
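The mid-volume crossover can be sanity-checked with simple arithmetic. The sketch below assumes a single rented RTX 4090 at the $0.79/hr low end quoted earlier and a hypothetical blended managed-API price of $4 per million tokens; that API price is an illustrative assumption, not a quote from any provider. It also ignores staff time, power, and whether one GPU can actually sustain the required throughput.

```python
def self_host_daily_cost(gpu_hourly_usd: float, gpu_count: int = 1) -> float:
    """Cost of keeping the GPUs running for 24 hours."""
    return gpu_hourly_usd * 24 * gpu_count

def api_daily_cost(tokens_per_day: float, usd_per_million_tokens: float) -> float:
    """Cost of sending the same daily volume to a managed API."""
    return tokens_per_day / 1_000_000 * usd_per_million_tokens

def break_even_tokens_per_day(gpu_hourly_usd: float,
                              usd_per_million_tokens: float,
                              gpu_count: int = 1) -> float:
    """Daily token volume above which self-hosting is the cheaper option."""
    daily = self_host_daily_cost(gpu_hourly_usd, gpu_count)
    return daily / usd_per_million_tokens * 1_000_000

# Assumed inputs: $0.79/hr GPU, hypothetical $4 per million API tokens.
threshold = break_even_tokens_per_day(0.79, 4.0)
```

With these inputs the threshold comes out at about 4.7 million tokens per day, which is in line with the ~5 million figure above. Doubling the GPU count or halving the API price doubles the threshold, so the crossover is sensitive to both assumptions.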

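```bash
# Example: Deploying a Quantized Llama-4 on a Sovereign Node
# The image listens on port 8000 by default, so --port 8080 matches the published port.
# The checkpoint is a GGUF file, so the quantization method is gguf rather than awq.
docker run -d --gpus all \
  -v "$(pwd)/models:/model" \
  -p 8080:8080 \
  vllm/vllm-openai \
  --model /model/llama-4-70b-q4.gguf \
  --quantization gguf \
  --port 8080 \
  --enforce-eager
```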
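Once a node like this is serving, applications talk to it over the OpenAI-compatible HTTP API rather than a public endpoint. The following is a minimal client sketch using only the standard library. It assumes the server is reachable at `localhost:8080` and that the served model name is the path passed to `--model`; both are assumptions to adjust for your deployment, and `build_chat_request` and `ask` are hypothetical helper names.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8080"     # assumed: host port published by docker
MODEL = "/model/llama-4-70b-q4.gguf"   # assumed: served name equals the --model path

def build_chat_request(question: str, context: str) -> urllib.request.Request:
    """Build a POST request for the /v1/chat/completions endpoint."""
    payload = {
        "model": MODEL,
        "messages": [
            {"role": "system", "content": f"Answer using only this context:\n{context}"},
            {"role": "user", "content": question},
        ],
        "temperature": 0.0,
    }
    return urllib.request.Request(
        f"{BASE_URL}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def ask(question: str, context: str, timeout: float = 60.0) -> str:
    """Send the request to the local node and return the model's reply text."""
    request = build_chat_request(question, context)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Because the base URL points at your own node, the prompt and the retrieved context never cross the network boundary you control.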

Security & Compliance: Auditing Your Private AI Cloud

In 2026, a 'SOC 2' certificate is just the baseline. For sovereign AI infrastructure, you must demand Audit Rights. This allows your internal security team (or a third party) to physically or virtually inspect the provider's controls.

Critical Compliance Checkpoints

- Jurisdictional Governance: Is the provider headquartered in a country with a 'Mutual Legal Assistance Treaty' (MLAT) that could bypass your local laws?

- Operational Autonomy: Are the 'root' admins local citizens with appropriate security clearances?

- Exit Provisions: Can you extract your models and data in a standard format (e.g., QCOW2 or PXI) within 24 hours if you decide to leave?
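These checkpoints are easier to apply consistently if they are written down as an explicit checklist. The sketch below encodes them, plus the audit-rights requirement, as a simple data structure and a function that lists failures. The field names, the `ProviderProfile` type, and the example provider are all hypothetical; the 24-hour export limit is the one stated above.

```python
from dataclasses import dataclass

@dataclass
class ProviderProfile:
    name: str
    subject_to_foreign_jurisdiction: bool  # e.g. reachable via the US CLOUD Act
    root_admins_local_and_cleared: bool
    export_hours: float                    # time to extract models and data
    grants_audit_rights: bool

def audit(provider: ProviderProfile, max_export_hours: float = 24.0) -> list[str]:
    """Return one finding per failed checkpoint; an empty list means all passed."""
    findings = []
    if provider.subject_to_foreign_jurisdiction:
        findings.append("jurisdiction: provider can be compelled under foreign law")
    if not provider.root_admins_local_and_cleared:
        findings.append("autonomy: root admins are not local, cleared staff")
    if provider.export_hours > max_export_hours:
        findings.append(
            f"exit: export takes {provider.export_hours:g}h, limit is {max_export_hours:g}h"
        )
    if not provider.grants_audit_rights:
        findings.append("audit: contract does not grant audit rights")
    return findings

# Hypothetical provider that fails the jurisdiction and exit checks.
example = ProviderProfile("ExampleCloud", True, True, 48, True)
issues = audit(example)
```

A provider with an empty findings list has cleared the baseline; anything else is a line item for contract negotiation.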

Key Takeaways

- Sovereignty is Legal, Not Just Physical: Data residency is useless if the provider is subject to the US CLOUD Act.

- AI-Native is the New Standard: Platforms like Pextra and Civo are replacing legacy hypervisors by building AI automation into the core.

- Quantization Changes the Math: Q4 models allow production-grade AI to run on significantly cheaper hardware, making private clouds more ROI-positive.

- Hybrid is Default: The best platforms in 2026 allow you to move workloads seamlessly between your server room and a sovereign regional provider.

- Audit Rights are Mandatory: Never sign a private cloud contract in 2026 that doesn't grant you the right to verify security controls.

Frequently Asked Questions

What is an AI-native private cloud?

An AI-native private cloud is an infrastructure stack specifically designed to handle the unique demands of AI, including native GPU orchestration, built-in vector databases for RAG, and AI-powered management tools to simplify complex operations.

How does the US CLOUD Act affect European AI data?

The US CLOUD Act allows US authorities to compel US-based technology companies (like AWS or Google) to provide data stored on their servers, regardless of where that data is physically located (e.g., in a German data center). This is why sovereign EU providers like Hetzner or StackIT are preferred for sensitive IP.

Can I run a sovereign RAG stack on consumer GPUs?

Yes. With Q4 quantization, models like Llama-3 70B can run on a cluster of RTX 4090s. However, for enterprise reliability, these should be managed by a private cloud platform that handles high availability and secure networking.

What is the difference between private cloud and sovereign cloud?

A private cloud is dedicated infrastructure for one tenant. A sovereign cloud is a private cloud that also guarantees legal and jurisdictional independence, ensuring that no foreign government can access the data through legal loopholes.

Is Proxmox suitable for enterprise AI in 2026?

Proxmox is excellent for technical teams and homelabs, but it lacks the native multi-tenancy, AI assistants, and advanced ABAC security required by many large enterprises. Platforms like Pextra PCE or CivoStack are often preferred for production environments.

Conclusion

The transition to AI-native private cloud platforms in 2026 represents the ultimate 'maturation' of the AI industry. We are moving away from the 'wild west' of public APIs and toward a structured, secure, and sovereign future. Whether you are a defense contractor needing sovereign AI infrastructure or a fintech startup looking for private LLM hosting, the tools now exist to keep your data under your own 'digital roof.'

Don't wait for a data breach or a subpoena to rethink your strategy. Start by auditing your current stack against the jurisdictional risks of 2026 and explore a pilot with sovereign leaders like Civo or Pextra today. Your IP is your future—keep it sovereign.